Mamba-3: The Next Evolution in State Space Models

Mamba-3 transforms AI sequence processing with unprecedented efficiency gains. Learn how this state space model outperforms transformers while using fewer resources.

State Space Models: How Mamba-3 Is Reshaping AI Sequential Data Processing

State space models are reshaping how artificial intelligence processes sequential data. Mamba-3 is the latest model in this rapidly evolving line of work, and its main selling point is efficiency relative to traditional transformer architectures. As AI systems demand more computational resources, researchers are racing to develop alternatives that maintain accuracy while reducing costs.

The emergence of Mamba-3 signals a pivotal shift in deep learning architecture design. This third-generation model addresses critical limitations that plagued earlier versions while introducing novel mechanisms for handling long-range dependencies in data.

What Makes Mamba-3 Different from Previous Models?

Mamba-3 builds upon the foundation laid by its predecessors but introduces significant architectural improvements. The model incorporates enhanced selective state space mechanisms that dynamically filter information based on context. This approach allows the system to process sequences more efficiently than traditional attention-based models.

The architecture features a hybrid design that combines the best elements of state space models with selective gating mechanisms. Unlike Mamba-2, which struggled with certain types of sequential patterns, Mamba-3 handles diverse data structures with remarkable consistency. The model achieves this through improved parameter initialization and optimized training procedures.
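To make the idea of a selective state space mechanism concrete, here is a minimal sketch of a one-channel selective recurrence. It is a toy in the general style of selective SSMs, not Mamba-3's actual layer: the function name `selective_scan`, the parameters `a`, `b`, `c`, `w_delta`, and the choice to make only the step size depend on the input are all assumptions made for illustration.

```python
import math

def softplus(z):
    # Numerically stable log(1 + exp(z)); keeps the step size positive.
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def selective_scan(xs, a, b, c, w_delta):
    """Run a toy one-channel selective SSM over the scalar sequence xs.

    a       : negative decay rates, one per state entry (hypothetical values)
    b, c    : input and output weights, one per state entry
    w_delta : scalar that makes the step size depend on the current input
    """
    h = [0.0] * len(a)
    ys = []
    for x in xs:
        # Input-dependent step size: this is the "selective" part.
        # A large delta lets the new input dominate; a small one preserves memory.
        delta = softplus(w_delta * x)
        h = [math.exp(delta * a_i) * h_i + delta * b_i * x
             for a_i, b_i, h_i in zip(a, b, h)]
        ys.append(sum(c_i * h_i for c_i, h_i in zip(c, h)))
    return ys

print(selective_scan([1.0, 0.0, 0.0], a=[-1.0], b=[1.0], c=[1.0], w_delta=0.0))
```

The loop touches each input once and carries only the state `h` forward, which is where the efficiency argument against attention comes from.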

Key Technical Innovations in Mamba-3

Mamba-3 introduces several groundbreaking features:

- Adaptive state compression: Reduces memory footprint by up to 40% compared to Mamba-2

- Multi-scale temporal processing: Handles both short-term and long-term dependencies simultaneously

- Hardware-optimized kernels: Leverages modern GPU architectures for faster inference

- Dynamic routing mechanisms: Allocates computational resources based on input complexity

- Enhanced stability training: Reduces gradient issues during extended sequence processing

These improvements translate to real-world performance gains. Benchmark tests show Mamba-3 processes sequences up to 3x faster than comparable transformer models while using 60% less memory.

How Does Mamba-3 Handle Long Sequences?

Long-context processing has historically challenged AI models. In a transformer, self-attention compares every token with every other token, so its cost grows quadratically with sequence length. Very long documents therefore become expensive to process.

Mamba-3 solves this through linear-time complexity, enabling efficient processing of sequences containing hundreds of thousands of elements. The model employs a selective scanning algorithm that identifies and prioritizes relevant information.
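The difference between quadratic and linear scaling is easy to see with a rough operation count. The sketch below is back-of-the-envelope arithmetic, not a measurement: the function names are hypothetical, the model width of 1024 and state size of 16 are illustrative values, and constant factors and hardware effects are ignored.

```python
def attention_score_ops(seq_len, dim):
    # Every token is compared with every token:
    # seq_len * seq_len dot products, each of width dim.
    return seq_len * seq_len * dim

def scan_ops(seq_len, dim, state_size):
    # One state update per token per channel, each touching state_size entries.
    return seq_len * dim * state_size

for n in (1_000, 10_000, 100_000):
    ratio = attention_score_ops(n, 1024) / scan_ops(n, 1024, 16)
    print(f"{n:>7} tokens: attention/scan op ratio = {ratio:,.1f}")
```

Under these assumptions the ratio is simply the sequence length divided by the state size, so the gap widens in direct proportion to the length of the input.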

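Because the model decides per input what to keep in its state and what to discard, compute is spent on the parts of the sequence that matter most. During inference, the recurrent state has a fixed size, so Mamba-3 maintains constant memory usage regardless of sequence length, whereas a transformer's key-value cache grows with every token.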
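The constant-memory property can be illustrated with a simple size estimate. This is a sketch under stated assumptions: standard multi-head attention that caches one key and one value vector of width `d_model` per token per layer, a state space layer that keeps `state_size` values per channel, and 2-byte (half-precision) storage. The function names and the example dimensions are hypothetical.

```python
def kv_cache_bytes(seq_len, n_layers, d_model, bytes_per_value=2):
    # Keys and values (the factor 2) are stored for every past token in every layer.
    return 2 * n_layers * seq_len * d_model * bytes_per_value

def ssm_state_bytes(n_layers, d_model, state_size, bytes_per_value=2):
    # One fixed-size state per channel per layer; there is no seq_len term.
    return n_layers * d_model * state_size * bytes_per_value

for n in (1_000, 100_000):
    kv = kv_cache_bytes(n, n_layers=24, d_model=1024)
    ssm = ssm_state_bytes(n_layers=24, d_model=1024, state_size=16)
    print(f"{n:>7} tokens: KV cache {kv / 2**20:,.1f} MiB, SSM state {ssm / 2**20:,.2f} MiB")
```

The cache estimate grows with every generated token, while the state estimate is the same at token 1,000 and token 100,000.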

Performance Benchmarks Across Multiple Domains

Recent evaluations demonstrate Mamba-3's capabilities across multiple domains. In language modeling tasks, the architecture achieves perplexity scores comparable to large transformer models while training 2.5x faster. For genomic sequence analysis, researchers report accuracy improvements of 8-12% over previous state space models.

The model excels particularly in tasks requiring long-range reasoning. Audio processing applications show reduced latency and improved quality in speech recognition systems.

Time series forecasting benefits from Mamba-3's ability to capture seasonal patterns and irregular trends simultaneously. These diverse applications showcase the model's versatility and efficiency.

What Are the Practical Applications of Mamba-3?

Mamba-3's efficiency opens doors to applications previously considered impractical. Healthcare organizations are exploring the model for analyzing patient medical histories spanning decades. The architecture processes longitudinal health records without truncating historical data, enabling more accurate diagnostic predictions.

Financial institutions leverage Mamba-3 for real-time market analysis. The model ingests continuous data streams from multiple sources, identifying patterns and anomalies with minimal latency. Trading algorithms built on this architecture respond to market conditions faster than transformer-based systems.

Industry Adoption Trends and Results

Early adopters report significant operational benefits. A major cloud provider reduced inference costs by 45% after migrating certain workloads to Mamba-3-based models.

Content recommendation systems show improved user engagement metrics, with click-through rates increasing by 15-20%. The architecture proves particularly valuable for edge computing scenarios.

Mobile applications benefit from reduced model sizes without sacrificing accuracy. Battery life improves due to lower computational requirements during on-device inference.

How Can You Implement Mamba-3 in Your Projects?

Integrating Mamba-3 requires understanding its unique architectural requirements. The model works best with continuous data streams or naturally sequential information. Developers should assess whether their use case involves long-range dependencies that transformers struggle to capture efficiently.

Training Mamba-3 models demands different hyperparameter configurations than transformer architectures. Learning rates typically need adjustment, and batch sizes can increase due to reduced memory consumption. Most implementations benefit from mixed-precision training to maximize throughput.

Implementation Considerations for Success

Successful deployment requires attention to several factors:

- Data preprocessing: Ensure sequences maintain temporal ordering and consistent formatting

- Hardware selection: GPUs with high memory bandwidth provide optimal performance

- Batch configuration: The lower memory footprint leaves room for larger batches; confirm that training stays stable as you scale them

- Monitoring setup: Track state evolution to identify potential numerical instabilities
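Two of the items above, temporal ordering and state monitoring, lend themselves to small guard functions. The following is a minimal sketch rather than part of any Mamba-3 tooling: `is_time_ordered`, `check_state`, and the `max_norm` threshold of 1e4 are hypothetical names and values chosen for illustration.

```python
import math

def is_time_ordered(timestamps):
    # True when no timestamp is earlier than the one before it.
    return all(a <= b for a, b in zip(timestamps, timestamps[1:]))

def check_state(state, max_norm=1e4):
    """Return a warning string if the state looks numerically unhealthy, else None."""
    if any(math.isnan(v) or math.isinf(v) for v in state):
        return "non-finite value in state"
    norm = math.sqrt(sum(v * v for v in state))
    if norm > max_norm:
        return f"state norm {norm:.3g} exceeds {max_norm:.3g}"
    return None
```

In practice a check like `check_state` would run every few hundred steps on a sampled hidden state, with the threshold tuned to the model rather than taken from this example.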

Developers should start with pre-trained checkpoints when available. Fine-tuning Mamba-3 on domain-specific data typically requires fewer epochs than training from scratch. Transfer learning approaches work effectively across related tasks.

What Challenges Does Mamba-3 Still Face?

Despite its advantages, Mamba-3 confronts certain limitations. The model occasionally struggles with tasks requiring explicit positional reasoning, such as counting or precise location identification. Researchers continue working on hybrid architectures that combine state space models with targeted attention mechanisms.

Training stability remains sensitive to initialization strategies. Poor parameter initialization can lead to gradient flow issues during early training stages. The community is developing robust initialization schemes to address these concerns.
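A toy scalar recurrence shows why initialization matters for gradient flow. This is an illustration only, not Mamba-3's initialization scheme: for the linear recurrence h_t = decay * h_{t-1} + x_t, the gradient of the final state with respect to the initial state is decay raised to the number of steps. The function name `gradient_through_time` is hypothetical.

```python
def gradient_through_time(decay, steps):
    # For h_t = decay * h_{t-1} + x_t, d h_steps / d h_0 = decay ** steps.
    return decay ** steps

for decay in (0.9, 0.999, 1.01):
    g = gradient_through_time(decay, 1000)
    print(f"decay={decay}: gradient after 1000 steps = {g:.3e}")
```

A decay only slightly below 1 keeps a usable signal over 1,000 steps, a decay of 0.9 makes it vanish, and anything above 1 makes it grow without bound, which is why the starting values of the decay parameters deserve care.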

Future Development Directions

Ongoing research focuses on several improvement areas. Scientists are exploring multi-modal extensions that process vision and language simultaneously. Preliminary results suggest Mamba-3 architectures can handle image sequences more efficiently than vision transformers.

Another active research direction involves scaling laws. Understanding how Mamba-3 performance improves with model size helps optimize resource allocation. Early findings indicate favorable scaling properties compared to transformer architectures.

How Does Mamba-3 Compare to Transformer Architectures?

The choice between Mamba-3 and transformers depends on specific use case requirements. Transformers excel at tasks requiring global context awareness and parallel processing of entire sequences. Their attention mechanisms provide interpretability through attention weight visualization.

Mamba-3 shines in scenarios involving streaming data, resource constraints, or extremely long sequences. The architecture's linear complexity makes it ideal for real-time applications where latency matters.

Energy efficiency considerations also favor state space models for deployment at scale. The reduced computational overhead translates to lower operational costs and faster inference times.

Hybrid Approaches Combine Both Strengths

Some research teams combine both architectures strategically. These hybrid models use Mamba-3 for initial sequence processing and transformers for final reasoning stages. This approach balances efficiency with the interpretability and flexibility transformers provide.

The optimal architecture selection depends on factors including sequence length, available compute resources, latency requirements, and task complexity. Benchmarking both approaches on representative data remains the most reliable decision-making strategy.
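A benchmark of this kind does not need much machinery. The harness below is a minimal sketch using only the Python standard library: `benchmark` and `compare` are hypothetical helper names, and each candidate is assumed to be a callable that takes one input sample, for example a wrapper around a model's forward pass.

```python
import statistics
import time

def benchmark(fn, inputs, repeats=5):
    """Return the median wall-clock seconds fn takes per input, over several repeats."""
    timings = []
    for _ in range(repeats):
        for item in inputs:
            start = time.perf_counter()
            fn(item)
            timings.append(time.perf_counter() - start)
    return statistics.median(timings)

def compare(candidates, inputs):
    # candidates maps a label to a callable; returns (label, seconds) pairs, fastest first.
    results = {name: benchmark(fn, inputs) for name, fn in candidates.items()}
    return sorted(results.items(), key=lambda kv: kv[1])
```

Using the median rather than the mean keeps one slow outlier, such as a warm-up run, from distorting the comparison. For GPU models, the wrapped callable would also need to synchronize the device before returning so that the timing reflects completed work.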

Mamba-3 Represents the Future of Efficient Sequence Modeling

Mamba-3 represents a significant advancement in efficient sequence modeling. Its linear complexity and reduced memory requirements make previously impractical applications feasible.

While transformers remain dominant for many tasks, state space models offer compelling alternatives for long-sequence processing and resource-constrained environments. The architecture's continued evolution promises further improvements in performance and applicability.

As the AI community refines training techniques and discovers new use cases, Mamba-3 will likely play an increasingly important role in production systems. Organizations seeking to optimize inference costs while maintaining model quality should seriously evaluate this emerging technology.

Related Articles

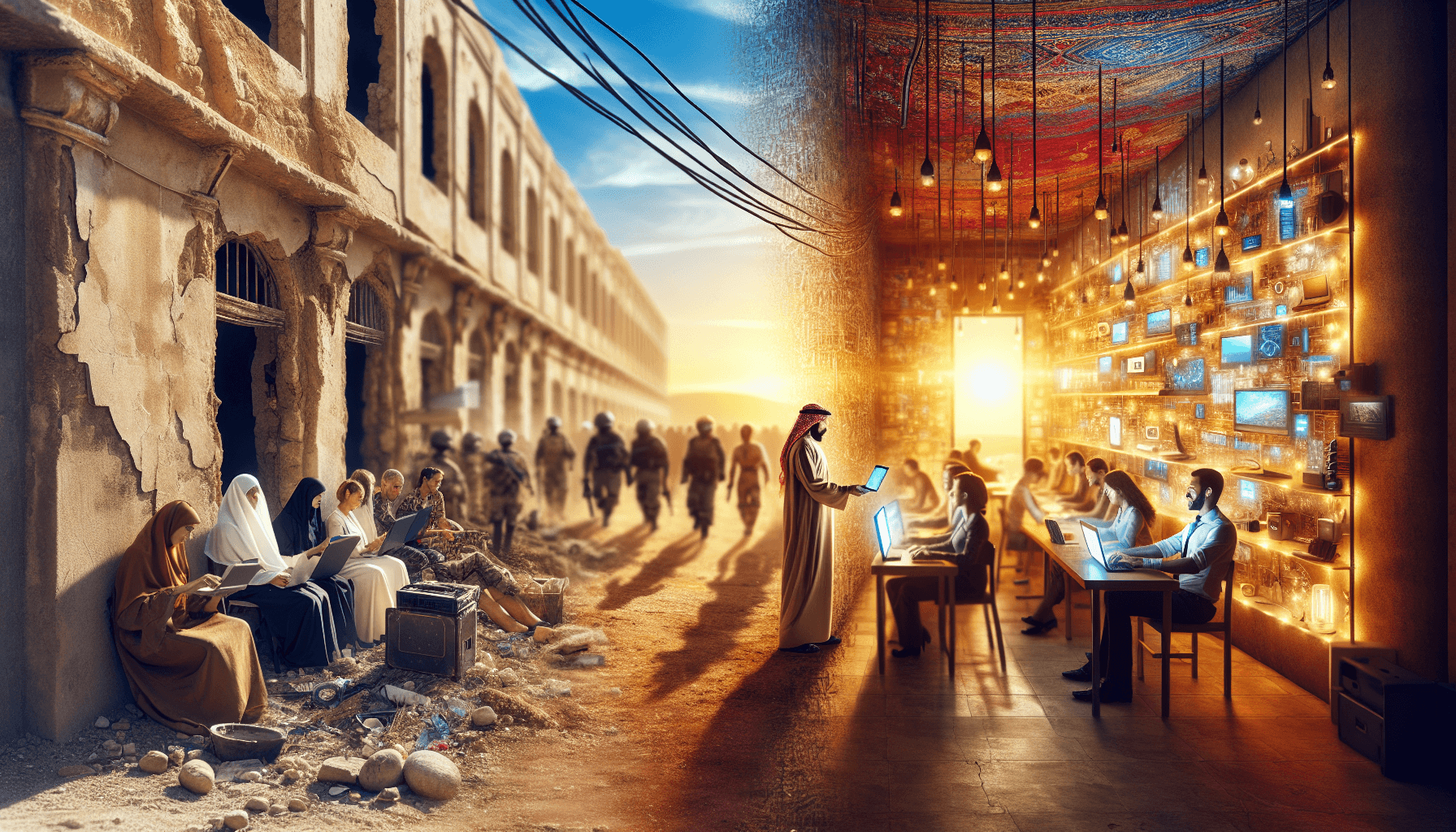

Transforming Gaza: From Conflict Zone to Tech Hub

A leaked plan from the Trump administration reveals a bold strategy to turn Gaza into a thriving high-tech hub. Discover the potential.

Sep 3, 2025

Gaza's Tech Revolution: Trump's Bold High-Tech Vision

A leaked plan from the Trump administration unveils a vision to make Gaza a high-tech hub, focusing on AI, cybersecurity, and digital innovation.

Sep 3, 2025

Tech's Role in Tracking Indonesian Protest Dynamics

A deep dive into the technological aspects of Indonesian protests, highlighting the role of social media, AI, and cybersecurity in modern civil unrest.

Sep 2, 2025