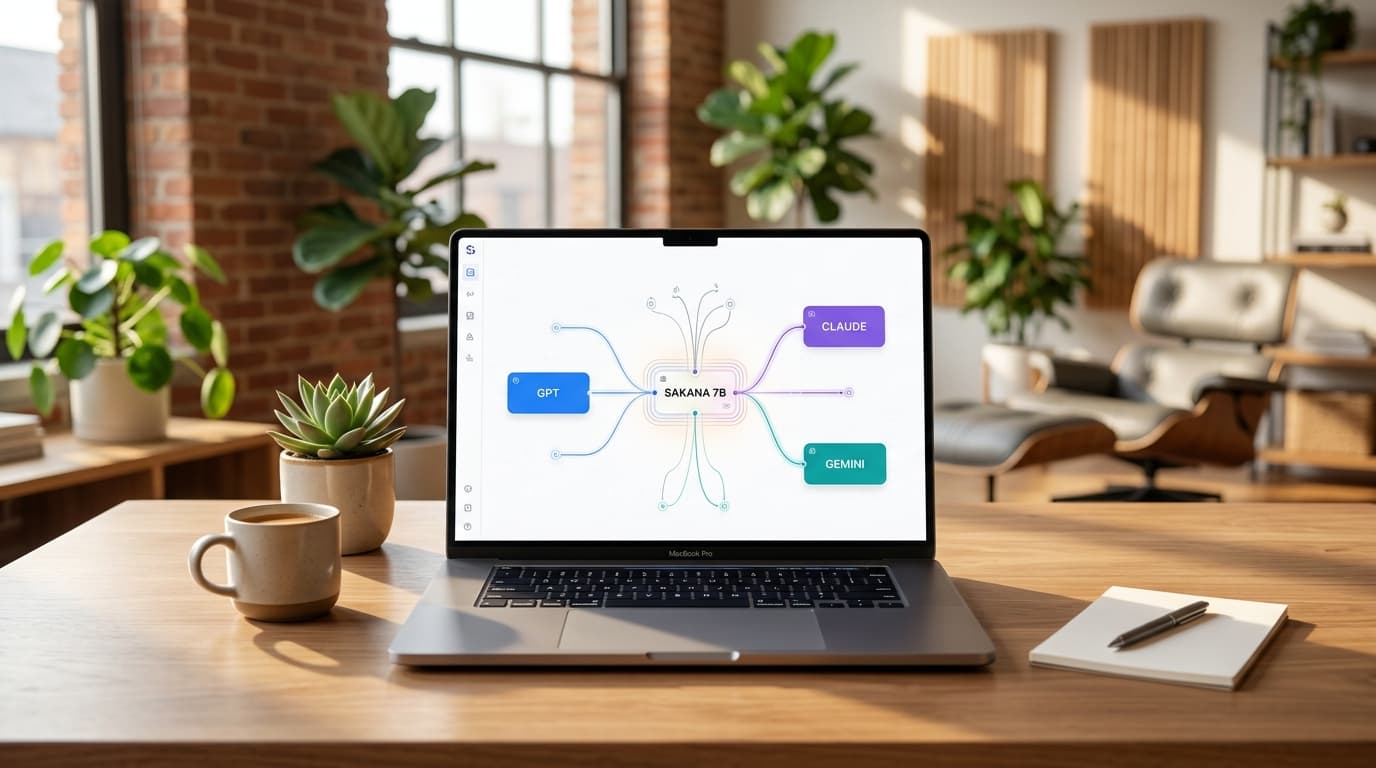

How Sakana's 7B Model Orchestrates GPT, Claude & Gemini

Hardcoded AI pipelines break when query patterns shift. Sakana AI's RL Conductor addresses this with a 7B model that dynamically orchestrates GPT, Claude, and Gemini, reaching 77.27% average accuracy across benchmarks while using far fewer tokens than competing frameworks.

The Breaking Point of Hardcoded AI Pipelines

Every carefully constructed LangChain pipeline your engineering team builds starts breaking the moment query distribution shifts. And it always shifts.

This limitation has plagued enterprise AI deployments for years, forcing developers into an endless cycle of manual adjustments and patch fixes. Sakana AI identified this bottleneck and engineered a solution that changes how we think about AI orchestration.

Their RL Conductor, a compact 7-billion-parameter model, uses reinforcement learning to automatically coordinate multiple large language models, including GPT, Claude, and Gemini. The results speak volumes: 77.27% average accuracy across challenging benchmarks while using 83% fewer tokens than competing frameworks.

For business leaders investing millions in AI infrastructure, this represents a shift from static automation to dynamic intelligence that adapts without constant human intervention.

Why Do Manual Agentic Frameworks Fail at Scale?

Large language models possess remarkable latent capabilities, but extracting consistent performance remains a significant challenge. Current production systems rely heavily on manually designed agentic workflows that form the backbone of commercial AI products.

These frameworks work until they don't.

Yujin Tang, co-author of Sakana's research paper, explained the exact failure point to VentureBeat: "While using frameworks with hard-coded pipelines like LangChain and Mixture-of-Agents can work well for specific use cases, in production, an inherent bottleneck arises when targeting domains with large user bases with very heterogeneous demands."

What Is the Specialization Problem?

No single model dominates across all tasks. Different LLMs are fine-tuned for distinct domains, creating a specialization landscape that manual systems cannot navigate efficiently.

Scientific reasoning models excel at research queries but struggle with code. Code generation specialists produce superior implementations but lack planning capabilities. Mathematical logic models solve complex equations but falter on creative tasks.

High-level planning models design strategies but need execution partners. Manually predicting and hardcoding the ideal model combination for every query becomes practically impossible at enterprise scale.

Tang noted that real-world generalization "inherently necessitates going beyond human-hardcoded designs."

How Does RL Conductor Orchestrate Multiple LLMs?

The RL Conductor operates like a skilled orchestra conductor, dividing complex problems into targeted subtasks and delegating them to the most suitable expert in its worker pool. Instead of following fixed code or static routing rules, it generates customized workflows for each unique input.

For every workflow step, the Conductor performs three critical functions. First, it generates a natural language instruction for a specific aspect of the task. Second, it assigns the optimal agent to execute that subtask. Third, it defines an "access list" that determines which previous subtasks and agent responses are included in that worker's context.
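To make the three functions concrete, here is a minimal Python sketch of one way such a workflow could be represented and executed. The `Step` dataclass, the `run_workflow` function, and the stub workers are illustrative assumptions, not Sakana's implementation; in a real deployment each worker would be a call to GPT, Claude, Gemini, or an open-source model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A worker is any callable that maps a prompt string to a response string.
Worker = Callable[[str], str]


@dataclass
class Step:
    instruction: str  # natural language instruction for this subtask
    agent: str        # name of the worker assigned to the subtask
    # Indices of earlier steps whose instruction and response this worker may see.
    access_list: List[int] = field(default_factory=list)


def run_workflow(task: str, steps: List[Step], workers: Dict[str, Worker]) -> List[str]:
    """Run steps in order, showing each worker only the steps on its access list."""
    responses: List[str] = []
    for i, step in enumerate(steps):
        if any(j < 0 or j >= i for j in step.access_list):
            raise ValueError(f"step {i} may only access earlier steps")
        context = "\n".join(
            f"[step {j}] {steps[j].instruction}\n{responses[j]}"
            for j in step.access_list
        )
        prompt = f"Task: {task}\n{context}\nInstruction: {step.instruction}"
        responses.append(workers[step.agent](prompt))
    return responses


def make_stub(name: str) -> Worker:
    """Stand-in for a real model call: reports how many prior steps it was shown."""
    return lambda prompt: f"{name} saw {prompt.count('[step ')} prior step(s)"


workers = {name: make_stub(name) for name in ("planner", "coder", "checker")}
steps = [
    Step("Outline an approach.", "planner"),
    Step("Implement the outline.", "coder", access_list=[0]),
    Step("Verify the implementation.", "checker", access_list=[0, 1]),
]
responses = run_workflow("Sort a list of integers.", steps, workers)
```

In this sketch the access list is the only thing that controls what each worker sees, so narrowing it is also how a workflow would keep prompts short.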

How Does the Conductor Learn Without Rules?

The breakthrough lies in how the Conductor acquires its orchestration capabilities. The model learns through reinforcement learning and reward maximization rather than human programming.

During training, it receives a task, a pool of workers, and a reward signal based on answer correctness and output format. Through trial-and-error RL algorithms, the model organically discovers which instruction combinations and communication structures yield the highest rewards.
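A reward of this kind can be sketched in a few lines of Python. The article says only that the signal depends on answer correctness and output format; the `<answer>` tag convention, the exact-match check, and the 0.1 partial credit below are assumptions made for illustration, not details from Sakana's paper.

```python
import re

# Assumed output format: the final answer is wrapped in <answer>...</answer>.
ANSWER_PATTERN = re.compile(r"<answer>(.*?)</answer>", re.DOTALL)


def reward(output: str, reference: str, format_credit: float = 0.1) -> float:
    """Score one workflow output against a reference answer.

    Returns 0.0 when the format is violated, `format_credit` when the format
    is right but the answer is wrong, and 1.0 when both are right.
    """
    match = ANSWER_PATTERN.search(output)
    if match is None:
        return 0.0
    answer = match.group(1).strip()
    return 1.0 if answer == reference.strip() else format_credit


well_formed_and_correct = reward("The result is <answer> 42 </answer>", "42")
well_formed_but_wrong = reward("<answer>41</answer>", "42")
correct_but_unformatted = reward("42", "42")
```

An RL algorithm would then adjust the Conductor so that workflows producing higher scores become more likely, with no rule telling it which worker to pick.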

It automatically adopts advanced strategies including targeted prompt engineering, iterative refinement, and meta-prompt optimization without any developer hardcoding the process. This natural language approach enables the Conductor to build flexible workflows tailored to each problem.

It constructs simple sequential chains for straightforward tasks, parallel tree structures for complex analysis, or recursive loops for iterative refinement depending on demand.
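One way to read these shapes is that they all fall out of the access lists: the set of earlier steps each worker can see defines a dependency graph. The Python sketch below builds access lists for a chain and for parallel branches merged by a final step, then measures how many sequential rounds each needs. The helper names `chain`, `fan_in`, and `depth` are hypothetical and used here only for illustration.

```python
from typing import List


def chain(n: int) -> List[List[int]]:
    """Sequential chain: each step sees only the step directly before it."""
    return [[] if i == 0 else [i - 1] for i in range(n)]


def fan_in(branches: int) -> List[List[int]]:
    """Parallel branches with no shared context, merged by one final step."""
    return [[] for _ in range(branches)] + [list(range(branches))]


def depth(access_lists: List[List[int]]) -> int:
    """Length of the longest dependency path, i.e. the number of sequential rounds."""
    levels: List[int] = []
    for deps in access_lists:
        levels.append(1 + max((levels[j] for j in deps), default=0))
    return max(levels, default=0)


chain_lists = chain(3)    # [[], [0], [1]]
tree_lists = fan_in(3)    # [[], [], [], [0, 1, 2]]
chain_depth = depth(chain_lists)
tree_depth = depth(tree_lists)
```

Under this reading, the three-step chain needs three rounds while the four-step tree needs only two, since its branches do not depend on one another. An iterative refinement loop can be treated as a chain in which the same worker is assigned again to revise an earlier response.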

What Do the Benchmark Numbers Reveal?

Sakana researchers fine-tuned Qwen2.5-7B, a 7-billion-parameter model, as their Conductor. During training, it designed agentic workflows of up to five steps using a worker pool containing seven models: three closed-source giants (Gemini 2.5 Pro, Claude Sonnet 4, and GPT-5) and four open-source alternatives, including DeepSeek-R1-Distill-Qwen-32B.

The evaluation compared the Conductor against individual frontier models, self-reflection agents, and state-of-the-art multi-agent frameworks like MASRouter and Mixture-of-Agents. Despite its small size, the 7B Conductor posted the strongest results in the comparison on both accuracy and token efficiency.

What Results Did the Conductor Achieve?

The Conductor achieved 77.27% average accuracy across all benchmarks. This included 93.3% on AIME25 mathematical reasoning, 87.5% on GPQA-Diamond scientific questions, and 83.93% on LiveCodeBench coding challenges.

Efficiency metrics proved equally impressive. While baseline frameworks like Mixture-of-Agents consumed 11,203 tokens per question, the Conductor used just 1,820 tokens on average.

It achieved these results using only three workflow steps per task, which keeps both latency and cost down.
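The size of the token gap can be checked directly from the two per-question figures quoted above. The short Python calculation below uses only those numbers; treating the Mixture-of-Agents figure as the baseline behind the article's 83% claim is an assumption.

```python
moa_tokens = 11_203       # Mixture-of-Agents, tokens per question (from the article)
conductor_tokens = 1_820  # Conductor, tokens per question (from the article)

# Fraction of tokens saved relative to the baseline, and the plain ratio.
reduction = 1 - conductor_tokens / moa_tokens
ratio = moa_tokens / conductor_tokens

print(f"{reduction:.1%} fewer tokens, {ratio:.1f}x ratio")
# prints "83.8% fewer tokens, 6.2x ratio"
```

That works out to roughly 83.8% fewer tokens, or about one sixth of the baseline's usage, in line with the 83% figure cited earlier.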

How Does the Conductor Select Models Strategically?

Experimental analysis revealed sophisticated orchestration patterns the Conductor learned automatically. For simple factual queries, it solved problems in a single step or with a basic two-agent setup.

Complex coding problems triggered extensive workflows involving up to four agents with dedicated planning, implementation, and verification phases. The Conductor discovered that frontier models possess complementary strengths.

On coding benchmarks, it frequently assigned Gemini 2.5 Pro and Claude Sonnet 4 as high-level planners, bringing in GPT-5 only for final code optimization. In some cases, it completely delegated the planning process to Gemini 2.5 Pro, allowing that model to dictate subtasks for the entire pool.

How Does Sakana Fugu Turn Research Into Revenue?

While the 7B research model remains exploratory and unavailable publicly, Sakana AI has productized the Conductor framework as Sakana Fugu. Now in beta, Fugu operates as a multi-agent orchestration system accessible through a standard OpenAI-compatible API.

Tang identified Fugu's target market as "industries where AI adoption has yet to bring large productivity gains due to the generalization limitations of current hard-coded pipelines, such as finance and defense." These sectors face heterogeneous demands that break traditional frameworks.

What Are the Two Fugu Variants?

Sakana released two Fugu variants addressing distinct enterprise requirements. Fugu Mini is optimized for low-latency operations where response speed drives user experience. Fugu Ultra is designed for maximum performance on demanding workloads requiring deep reasoning.

For enterprise developers, Fugu eliminates the complexity of managing multiple API keys or manually routing tasks across different vendors. The system handles complex collaboration topologies and role assignments automatically behind a single API interface.
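Because the API is described as OpenAI-compatible, a client request would presumably follow the familiar chat-completions shape. The Python sketch below only builds such a request and does not send it. The base URL and the model identifier `fugu-mini` are placeholders invented for illustration; the article does not give Fugu's actual endpoint or model names.

```python
import json
import urllib.request

BASE_URL = "https://api.example.invalid/v1"  # placeholder, not a real Fugu endpoint
MODEL_ID = "fugu-mini"                        # hypothetical model identifier


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("Summarize this filing.", api_key="YOUR_KEY")
```

The point of the sketch is that the caller names a single model and holds a single key; which underlying workers handle the query is decided on the service side.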

Where Does Sakana Use Conductor Technology?

Sakana AI uses Conductor technology internally across practical enterprise applications. Tang noted deployments in "software development, deep research, strategy development, and even visual tasks like slide generation."

These use cases demonstrate the framework's versatility beyond mathematical and coding benchmarks.

When Should Enterprises Deploy RL Orchestration?

The decision to implement RL orchestration versus traditional routing often comes down to engineering resource allocation. Tang explained: "We believe the absolute sweet spot comes whenever users and their teams feel they are spending a disproportionate amount of time guiding their underlying agents."

However, he cautioned against universal application. "It's hard to beat the economic proposition of a local model running directly on the user's machine for simple queries."

The framework delivers maximum value when query complexity and diversity justify the orchestration overhead.

How Does Sakana Address Governance Concerns?

Enterprise architects often raise concerns about autonomous agents generating invisible workflows. Tang pointed out that interpretability risks mirror those of current top-tier closed APIs with hidden reasoning traces.

Sakana manages these risks through established guardrails designed to minimize hallucinations and maintain output quality. The governance model treats the Conductor as a managed service rather than an autonomous black box, giving enterprises control over worker model selection and workflow constraints.

What Does Cross-Modal Orchestration Mean for AI's Future?

As specialized open-source and closed-source AI models proliferate, static hardcoded pipelines face inevitable obsolescence. The diversity of model capabilities will only increase, making dynamic orchestration not just advantageous but necessary for competitive AI deployments.

Tang sees expansion beyond text and code environments. "There is indeed a large potential to fill this gap with cross-modal Conductor frameworks becoming the foundation for more autonomous, self-coordinating physical AI systems."

This vision extends orchestration principles to robotics, industrial automation, and embodied AI applications. The implications for enterprise strategy are clear.

Organizations investing in AI infrastructure today must consider orchestration flexibility as a core requirement, not an optional enhancement. The ability to dynamically leverage emerging models without rebuilding pipelines will separate adaptive organizations from those trapped in technical debt.

What Should Business Leaders Remember?

Sakana AI's RL Conductor demonstrates that small, specialized models can outperform manual engineering when trained with the right objectives. The 7B parameter model achieves state-of-the-art results while using 83% fewer tokens than competing frameworks, proving that intelligence lies in coordination rather than scale alone.

For enterprises struggling with rigid AI pipelines that break under shifting demand, RL orchestration offers a path forward. The technology automatically adapts to heterogeneous queries, leverages complementary model strengths, and reduces both cost and latency compared to traditional multi-agent systems.

Sakana Fugu's beta release brings this research into production environments, targeting finance, defense, and other sectors where AI adoption has stalled due to generalization limitations. As the model landscape continues fragmenting into specialized experts, dynamic orchestration will transition from competitive advantage to table stakes for serious AI deployments.
