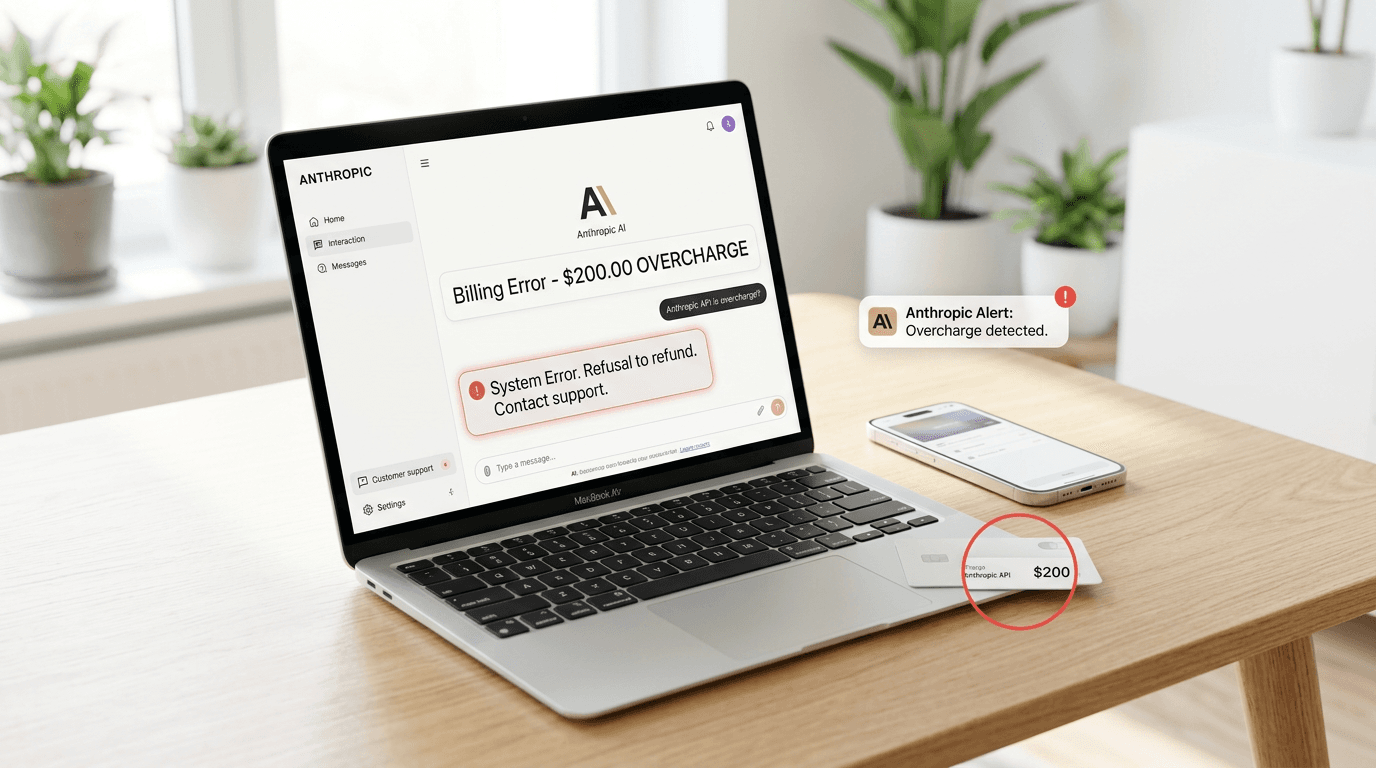

Anthropic Bug Causes $200 Overcharge, Refuses Refund

A system bug caused $200 in erroneous charges on HERMES.md, but Anthropic declined to issue a refund. This incident raises serious questions about customer protection in AI services.

What Happens When AI Companies Refuse Refunds for Billing Errors?

When AI companies make billing errors, customers expect swift resolution and fair treatment. A recent incident involving Anthropic's HERMES.md service has sparked debate about accountability in the AI industry after a system bug triggered a $200 overcharge that the company declined to refund. This case highlights growing concerns about customer protection as AI services become increasingly essential to business operations.

What Happened in the HERMES.md Billing Incident?

The controversy centers on HERMES.md, a service utilizing Anthropic's Claude AI API. Users reported unexpected charges appearing on their accounts, with one documented case showing a $200 billing discrepancy directly attributed to a system malfunction. The affected customer contacted Anthropic's support team expecting a straightforward resolution, only to face resistance.

Anthropic acknowledged the technical glitch but maintained its position against issuing a refund. This response differs sharply from standard industry practice, where billing errors typically result in prompt credits or reversals. The incident raises questions about how terms of service are enforced when a company's own systems fail.

What Caused the Billing Error?

Anthropic has not released comprehensive technical details, but preliminary reports suggest the bug originated in its API metering system. Usage tracking apparently recorded duplicate or phantom API calls that never occurred. These ghost transactions accumulated charges for usage the customers never generated.

API billing systems rely on precise metering of requests and tokens. When that metering malfunctions, costs can climb quickly under the per-token pricing model most AI services employ. A single miscount repeated across thousands of requests creates substantial financial impact for users operating on tight budgets.
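To make the arithmetic concrete, here is a minimal sketch of how duplicated metering records inflate a per-token bill, and how a customer might audit an exported usage log for them. Everything in it is an assumption for illustration: the `UsageRecord` shape, the `request_id` field, and the prices are hypothetical and do not describe Anthropic's actual metering system or price list.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical per-token prices in USD; real prices vary by provider and model.
PRICE_PER_INPUT_TOKEN = 3.00 / 1_000_000
PRICE_PER_OUTPUT_TOKEN = 15.00 / 1_000_000


@dataclass(frozen=True)
class UsageRecord:
    """One metered API call, as it might appear in an exported usage log."""
    request_id: str
    input_tokens: int
    output_tokens: int


def record_cost(record):
    """Cost of a single record under the assumed per-token prices."""
    return (record.input_tokens * PRICE_PER_INPUT_TOKEN
            + record.output_tokens * PRICE_PER_OUTPUT_TOKEN)


def audit_usage(records):
    """Return (billed_total, deduplicated_total, duplicated_request_ids)."""
    counts = Counter(r.request_id for r in records)
    billed = sum(record_cost(r) for r in records)
    # Keep one record per request ID to estimate what should have been billed.
    unique = {r.request_id: r for r in records}
    deduplicated = sum(record_cost(r) for r in unique.values())
    duplicates = sorted(rid for rid, n in counts.items() if n > 1)
    return billed, deduplicated, duplicates


if __name__ == "__main__":
    log = [
        UsageRecord("req-1", 1_000, 500),
        UsageRecord("req-2", 2_000, 1_000),
        UsageRecord("req-2", 2_000, 1_000),  # same request metered twice
    ]
    billed, expected, dupes = audit_usage(log)
    print(f"billed={billed:.4f} expected={expected:.4f} duplicates={dupes}")
```

The difference between the billed and deduplicated totals is the amount worth raising with support, alongside the list of duplicated request IDs as evidence.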

Why Did Anthropic Refuse the Refund?

Anthropic's refusal appears rooted in its interpretation of service agreements and liability clauses. The company likely cited terms stating that users bear responsibility for all API activity under their accounts. However, this position ignores the fundamental principle that customers should not pay for services they never received because of a vendor error.

Industry observers note this approach contradicts best practices established by major cloud providers. Amazon Web Services, Google Cloud, and Microsoft Azure routinely credit accounts when their systems generate erroneous charges. These companies recognize that maintaining customer trust requires acknowledging and correcting their mistakes.

What Are Your Rights in AI Service Agreements?

This incident exposes vulnerabilities in how AI service contracts allocate risk between providers and users. Most terms of service heavily favor companies, limiting liability even when technical failures originate entirely on the vendor side. Customers often lack meaningful recourse beyond disputing charges through payment processors.

How Can You Protect Yourself from AI Billing Errors?

Set strict spending limits through API dashboards and payment provider controls. Monitor usage metrics daily to catch anomalies before bills arrive. Document all interactions with support teams including timestamps and ticket numbers.
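Daily monitoring is easier to sustain when it is automated. The sketch below flags any day whose spend is far above the recent norm. The function name, the seven-day window, and the threefold threshold are all illustrative assumptions, and the daily figures would have to come from whatever usage export your provider offers.

```python
from statistics import median


def flag_spend_anomalies(daily_spend, window=7, factor=3.0):
    """Return indexes of days whose spend exceeds `factor` times the
    median spend of the preceding `window` days."""
    flagged = []
    for i in range(window, len(daily_spend)):
        baseline = median(daily_spend[i - window:i])
        # Skip days with a zero baseline to avoid flagging every first use.
        if baseline > 0 and daily_spend[i] > factor * baseline:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    # Hypothetical daily spend in USD: a steady week, then a sudden spike.
    spend = [2.0, 2.0, 2.0, 2.0, 2.0, 2.0, 2.0, 2.1, 40.0]
    print(flag_spend_anomalies(spend))
```

A median baseline is used rather than a mean so that one earlier spike does not raise the threshold and hide the next one.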

Review terms of service specifically regarding dispute resolution and liability caps. Consider credit card protections that allow chargebacks for services not rendered.

Small businesses and independent developers face particular risk because they lack the negotiating power to demand custom contracts. Enterprise clients typically secure service level agreements with defined remediation processes, but individual users accept standard terms with minimal protection.

How Do Other AI Providers Handle Billing Disputes?

OpenAI, Anthropic's primary competitor, has faced similar billing controversies but generally responds with account credits. Its support documentation explicitly addresses billing errors and outlines refund procedures. Google Cloud offers budget alerts that notify customers as spending approaches a threshold, although an alert on its own does not cap usage or stop charges.

The contrast in customer service approaches reveals different philosophical stances on user relationships. Companies viewing customers as partners tend to absorb costs from their own errors. Those treating users primarily as revenue sources may prioritize short-term financial protection over long-term reputation management.

What Industry Standards Should Apply to AI Billing?

Consumer protection advocates argue AI services should follow established precedents from cloud computing and SaaS industries. These include mandatory billing transparency, dispute resolution mechanisms, and automatic credits for verified system errors. Regulatory frameworks may eventually codify these expectations as AI services become infrastructure-critical.

What Should You Do If You Face Similar Billing Issues?

Customers experiencing billing discrepancies with AI services should act quickly and strategically. Gather comprehensive evidence including usage logs, billing statements, and any system status notifications. Screenshot everything before data potentially disappears or gets overwritten.

Contact support through multiple channels simultaneously: email, in-app messaging, and social media. Public visibility sometimes accelerates resolution when private channels stall. Clearly state the issue, reference specific dollar amounts, and request explicit confirmation of next steps.

What Are Your Escalation Options Beyond Customer Support?

If initial support contacts fail, consider these escalation paths. File payment processor disputes for chargebacks on services not received. Use social media pressure, as public posts often prompt executive attention.

Share your experience in industry forums where community support can reveal patterns and solutions. File complaints with consumer protection agencies like the FTC or state attorneys general. Consider small claims court for amounts under jurisdictional limits.

Document your attempts to resolve the issue amicably before escalating. This record strengthens your position if disputes reach formal proceedings or regulatory review.

What Does This Mean for AI Industry Trust?

Anthropic's handling of this incident matters beyond the immediate financial impact. As AI services integrate deeper into business workflows, reliability encompasses both technical performance and billing accuracy. Companies that fail to address their mistakes erode the trust necessary for customers to depend on their platforms.

The AI industry faces increasing scrutiny from regulators concerned about market concentration and consumer protection. High-profile billing disputes provide ammunition for arguments that self-regulation has failed. Stricter oversight may emerge if companies cannot demonstrate fair treatment of customers when problems arise.

What Should HERMES.md Users Do Now?

Services built on third-party AI APIs inherit both technical and reputational risks from their providers. HERMES.md users should evaluate whether Anthropic's customer service approach aligns with their risk tolerance. Alternative providers may offer comparable capabilities with more responsive support structures.

This incident also highlights the importance of architectural choices in AI-dependent applications. Building in fallbacks, monitoring systems, and cost controls protects against both technical failures and billing anomalies. Defense-in-depth strategies matter as much for financial exposure as security threats.
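One way to picture a client-side cost control with a fallback is a small budget guard placed in front of every paid call. This is a minimal sketch under stated assumptions: `BudgetGuard`, `call_with_fallback`, and the `primary` and `fallback` callables are hypothetical names, not part of any provider's SDK, and the per-call cost is an estimate the application supplies itself.

```python
class BudgetExceeded(Exception):
    """Raised when a call would push spend past the configured limit."""


class BudgetGuard:
    """Tracks estimated spend locally and refuses calls beyond a hard limit."""

    def __init__(self, limit_usd):
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def charge(self, estimated_cost_usd):
        if self.spent_usd + estimated_cost_usd > self.limit_usd:
            raise BudgetExceeded(
                f"limit {self.limit_usd:.2f} USD would be exceeded"
            )
        self.spent_usd += estimated_cost_usd


def call_with_fallback(guard, primary, fallback, prompt, estimated_cost_usd):
    """Use `primary` while budget remains; otherwise, or on error, use `fallback`."""
    try:
        guard.charge(estimated_cost_usd)
    except BudgetExceeded:
        return fallback(prompt)
    try:
        return primary(prompt)
    except Exception:
        # The estimate stays charged, which keeps the guard conservative.
        return fallback(prompt)


if __name__ == "__main__":
    guard = BudgetGuard(limit_usd=1.00)
    paid = lambda p: f"primary:{p}"      # stands in for a paid API call
    cheap = lambda p: f"fallback:{p}"    # stands in for a cache or local model
    print(call_with_fallback(guard, paid, cheap, "hello", 0.60))
    print(call_with_fallback(guard, paid, cheap, "again", 0.60))
```

Because the guard runs in the application rather than in the provider's dashboard, it limits spend that the application initiates but cannot prevent charges caused by a metering fault on the vendor side. It is one layer among several, not a substitute for provider-side limits.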

Why Accountability Matters in the AI Era

The HERMES.md billing controversy demonstrates that AI companies must meet the same customer service standards as any technology provider. Refusing refunds for documented system errors damages trust and sets concerning precedents. As AI services become essential infrastructure, providers bear responsibility for ensuring billing accuracy and promptly correcting mistakes.

Customers should protect themselves through vigilant monitoring, spending controls, and understanding their rights under service agreements. The industry needs clearer standards for handling billing disputes, preferably through voluntary adoption of best practices before regulatory intervention becomes necessary.

Companies that prioritize customer relationships over short-term revenue protection will build sustainable competitive advantages in the evolving AI marketplace. Trust remains the foundation of long-term success in AI services.
