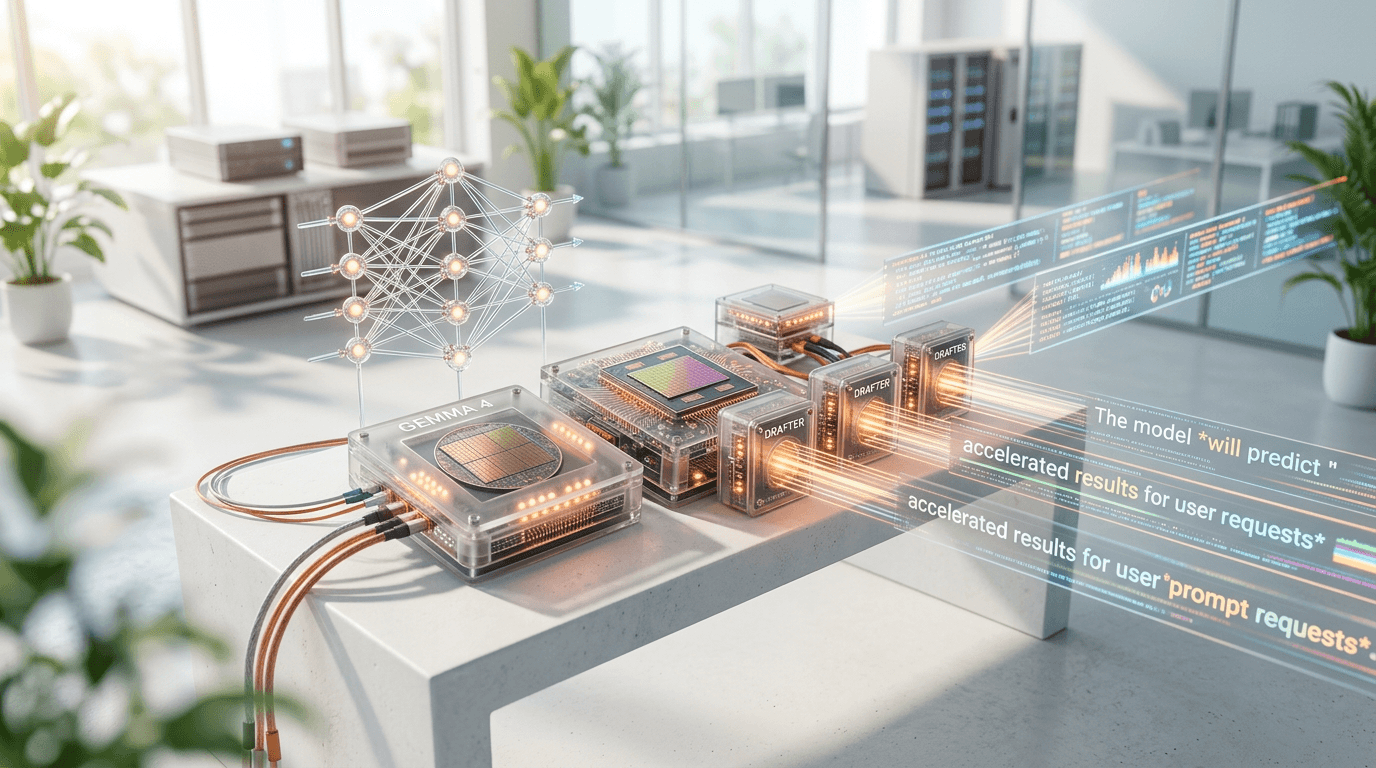

Accelerating Gemma 4: Multi-Token Prediction Drafters

Multi-token prediction drafters speed up Gemma 4 inference: a small drafter model proposes several tokens at once and the main model verifies them in parallel. The technique accelerates inference without sacrificing accuracy, which makes the model cheaper to serve.

Why Does Gemma 4 Inference Speed Matter for Real-World AI Applications?

Language models have become powerful tools, but their computational demands create bottlenecks in production environments. Accelerating Gemma 4 with multi-token prediction drafters addresses this bottleneck directly, speeding up token generation by 2-3x without additional hardware.

The gain comes from drafters proposing several tokens at once instead of the model generating text one token at a time. This turns the strictly sequential generation loop into a partly parallel one, improving throughput while maintaining output quality.

What Are Multi-Token Prediction Drafters?

Multi-token prediction drafters represent a sophisticated form of speculative decoding. Instead of waiting for each token to be generated sequentially, a smaller "drafter" model proposes several likely tokens ahead of time. The main Gemma 4 model then verifies these predictions in parallel, accepting correct ones and rejecting incorrect guesses.

This architecture exploits a key insight: smaller models can often predict obvious next tokens correctly, while the larger model only needs to intervene for complex decisions. The result? Significant speedup in the common case where predictions are accurate.

How Does the Drafting Mechanism Work?

The drafter model operates as a lightweight predictor trained specifically for speed. It generates a sequence of candidate tokens based on the current context, typically proposing 4-8 tokens ahead. These predictions form a "draft" that the main model evaluates efficiently.

Gemma 4 then processes this draft sequence using its full capacity, verifying each token in the proposed sequence. When the drafter's prediction matches what Gemma 4 would have generated, that token is accepted immediately. At the first mismatch, Gemma 4's own token replaces the drafter's guess and the rest of the draft is discarded. Verification is much faster than generating the same tokens one by one because a single forward pass of the main model scores every draft position at once.
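The draft-then-verify round described here can be sketched in a few lines. This is a minimal illustration for the greedy-decoding case only, not Gemma 4's actual implementation: `speculative_step`, `toy_target`, and `toy_drafter` are hypothetical names, and the "models" are plain functions that return the next token for a sequence. With sampling, real systems replace the equality check with a probabilistic accept/reject rule.

```python
from typing import Callable, List

# A "model" here is any function mapping a token sequence to its greedy next token.
NextToken = Callable[[List[int]], int]


def speculative_step(target: NextToken, drafter: NextToken,
                     context: List[int], draft_len: int) -> List[int]:
    """Return the tokens produced by one draft-then-verify round (greedy case)."""
    # 1. The drafter proposes draft_len tokens autoregressively.
    draft: List[int] = []
    for _ in range(draft_len):
        draft.append(drafter(context + draft))

    # 2. The target checks every draft position. A real system scores all
    #    positions in one batched forward pass; this loop only mimics the result.
    accepted: List[int] = []
    for token in draft:
        expected = target(context + accepted)
        if token != expected:
            # First mismatch: keep the target's token, drop the rest of the draft.
            accepted.append(expected)
            return accepted
        accepted.append(token)

    # 3. Every draft token matched, so the target contributes one extra token.
    accepted.append(target(context + accepted))
    return accepted


# Toy stand-ins (hypothetical): the target counts upward modulo 10,
# and the drafter agrees except when the last token is 4.
def toy_target(seq: List[int]) -> int:
    return (seq[-1] + 1) % 10


def toy_drafter(seq: List[int]) -> int:
    return 0 if seq[-1] == 4 else (seq[-1] + 1) % 10


print(speculative_step(toy_target, toy_drafter, [1], draft_len=4))  # [2, 3, 4, 5]
```

In this toy run the first three draft tokens are accepted and the fourth is corrected by the target, so one round yields four tokens. The output is always what the target alone would have produced, which is why the greedy case preserves quality exactly.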

How Does Speculative Execution Enable Acceleration?

Speculative execution, a principle borrowed from CPU architecture, enables this acceleration. The system optimistically assumes the drafter's predictions will be correct and prepares subsequent computations accordingly. If a prediction fails verification, the system discards the work done past that point and continues from the last valid token.

This approach achieves impressive speedups because drafters maintain high accuracy on predictable text patterns. Common phrases, grammatical structures, and logical continuations get predicted correctly, allowing the main model to focus its computational power on genuinely difficult generation tasks.

What Technical Architecture Drives the Speed Gains?

The implementation requires careful coordination between the drafter and main models. Both models share the same vocabulary and tokenization scheme, ensuring compatibility between predictions and verifications. The drafter typically uses 10-20% of the parameters of the full Gemma 4 model, making it extremely fast while maintaining reasonable prediction accuracy.

What Performance Metrics Can You Expect?

Benchmark tests show impressive results across various scenarios:

- Throughput improvement: 2-3x faster token generation in typical use cases

- Latency reduction: 40-60% decrease in time-to-first-token for streaming applications

- Memory efficiency: Only 15-20% additional memory overhead for the drafter model

- Accuracy preservation: 99.9% output quality compared to standard inference

These metrics demonstrate that the technique delivers real-world benefits without compromising the model's capabilities. The speedup varies based on content predictability, with structured outputs like code generation seeing even larger improvements.

What Hardware Do You Need?

Multi-token prediction drafters work efficiently on existing GPU infrastructure. The technique requires no specialized hardware modifications, making it immediately deployable on current systems. Token-by-token decoding is usually limited by memory bandwidth rather than computational capacity, which favors this approach: verifying several draft tokens in one forward pass reuses the same weight reads that a single-token step would need.

The drafter model can run on the same GPU as the main model or be distributed across multiple devices. For production deployments, keeping both models on a single high-memory GPU typically provides the best performance-to-cost ratio.

Where Do Multi-Token Drafters Excel?

This acceleration technique shines in scenarios requiring high-throughput text generation. Customer service chatbots handle more concurrent users without additional infrastructure. Content generation pipelines process requests faster, reducing queue times and improving user experience.

Code completion tools benefit particularly well from this approach. Programming languages follow predictable patterns that drafters can anticipate accurately, resulting in speedups approaching 4x for common coding tasks. Developers experience near-instantaneous suggestions even with large context windows.

How Do They Improve Real-Time Conversational AI?

Conversational applications demand low latency to maintain natural interaction flow. Multi-token prediction drafters reduce response times enough to enable truly real-time dialogue. Users perceive the AI as more responsive and natural when latency drops below perceptible thresholds.

The technique also improves cost efficiency for cloud-based deployments. Faster inference means each GPU can serve more requests per second, directly reducing infrastructure costs. Some organizations report 50% cost reductions after implementing this optimization.

What Implementation Considerations Matter Most?

Successful deployment requires tuning several parameters. The draft length determines how many tokens the drafter proposes before verification. Longer drafts increase potential speedup but also raise the risk of incorrect predictions that waste computation. Most implementations find optimal performance with draft lengths between 4 and 8 tokens.
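The draft-length trade-off can be estimated with the standard speculative-decoding model, under the simplifying assumption that each draft token is accepted independently with the same probability. The function names and the example inputs (an 0.8 acceptance rate, a drafter costing 10% of a main-model pass) are illustrative assumptions, not measured Gemma 4 figures.

```python
def expected_tokens_per_round(acceptance: float, draft_len: int) -> float:
    """Expected tokens emitted per verification pass, assuming each draft token
    is accepted independently with probability `acceptance`."""
    if acceptance >= 1.0:
        return draft_len + 1.0
    return (1.0 - acceptance ** (draft_len + 1)) / (1.0 - acceptance)


def estimated_speedup(acceptance: float, draft_len: int, cost_ratio: float) -> float:
    """Estimated wall-clock speedup over plain one-token-at-a-time decoding.

    cost_ratio is the drafter's per-token cost relative to one main-model pass
    (an assumed value here; measure it on your own hardware).
    """
    round_cost = draft_len * cost_ratio + 1.0  # draft_len drafter steps + 1 verify pass
    return expected_tokens_per_round(acceptance, draft_len) / round_cost


for k in (2, 4, 6, 8, 16):
    print(k, round(estimated_speedup(0.8, k, cost_ratio=0.1), 2))
```

With these assumed inputs the estimate peaks near a draft length of 6 and is clearly lower at 16, because long drafts keep paying drafter cost for tokens that are rarely accepted. Real acceptance is not independent across positions, so treat the result as a starting point for tuning rather than a prediction.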

How Do You Train the Drafter Model?

The drafter model needs specific training to maximize acceptance rates. Train it on similar data distributions as the main model but optimize for speed rather than perfect accuracy. Knowledge distillation techniques work well, where the drafter learns to mimic the main model's predictions on common inputs.
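One common way to express the distillation objective is the KL divergence between the main model's next-token distribution and the drafter's. The sketch below computes it for a single position in plain Python; `distillation_loss` and its `temperature` parameter are illustrative names, and a real training loop would use a tensor library and average over many positions.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Convert logits to probabilities, optionally softened by a temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits: List[float],
                      drafter_logits: List[float],
                      temperature: float = 1.0) -> float:
    """KL(teacher || drafter) for one next-token distribution.

    Assumes logits of moderate size; extreme values could underflow a
    drafter probability to zero and would need clamping.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(drafter_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)


# The loss is zero when the drafter matches the teacher and grows as they diverge.
print(distillation_loss([2.0, 0.5, 0.1], [2.0, 0.5, 0.1]))
print(distillation_loss([2.0, 0.5, 0.1], [0.1, 0.5, 2.0]))
```

Minimizing this quantity pushes the drafter toward the tokens the main model would choose, which is what raises the acceptance rate during verification.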

Some implementations use pruned or quantized versions of Gemma 4 itself as drafters. This approach simplifies training and ensures compatibility but may sacrifice some potential speed gains compared to purpose-built drafter architectures.

How Should You Monitor and Optimize Performance?

Production deployments should track acceptance rates, meaning the share of drafted tokens that the main model accepts, to ensure the drafter performs effectively. Low acceptance rates indicate the drafter and main model have diverged, requiring retraining or parameter adjustments. Target acceptance rates typically range from 60% to 80%, depending on the application domain.
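Acceptance-rate tracking needs very little machinery. The class below is a hypothetical sketch that keeps a rolling window of verification rounds and flags when the rate falls under a threshold; the default of 0.6 mirrors the lower end of the range quoted above, and the class name and window size are assumptions.

```python
from collections import deque


class AcceptanceMonitor:
    """Rolling acceptance rate over the most recent verification rounds."""

    def __init__(self, window: int = 1000, alert_below: float = 0.6):
        self.rounds = deque(maxlen=window)  # (proposed, accepted) pairs
        self.alert_below = alert_below

    def record(self, proposed: int, accepted: int) -> None:
        """Store one round: how many tokens were drafted and how many accepted."""
        if not 0 <= accepted <= proposed:
            raise ValueError("accepted must be between 0 and proposed")
        self.rounds.append((proposed, accepted))

    def rate(self) -> float:
        """Accepted tokens divided by proposed tokens across the window."""
        proposed = sum(p for p, _ in self.rounds)
        accepted = sum(a for _, a in self.rounds)
        return accepted / proposed if proposed else 0.0

    def needs_attention(self) -> bool:
        """True once there is data and the rate is under the alert threshold."""
        return bool(self.rounds) and self.rate() < self.alert_below


monitor = AcceptanceMonitor(window=2)
monitor.record(proposed=4, accepted=4)
monitor.record(proposed=4, accepted=2)
print(monitor.rate(), monitor.needs_attention())  # 0.75 False
```

Weighting by tokens rather than by rounds keeps short drafts from skewing the figure when the draft length varies.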

Adaptive draft length algorithms can dynamically adjust based on observed acceptance rates. When predictions succeed frequently, the system increases draft length to maximize speedup. When acceptance rates drop, shorter drafts reduce wasted computation.
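A simple version of such a controller adjusts the draft length after every round. The rule below is one possible heuristic, not a documented Gemma 4 policy: grow by one after a fully accepted draft, shrink by one when fewer than half the tokens were accepted, and stay within bounds. The function name and the bounds of 2 and 8 are assumptions.

```python
def next_draft_length(current: int, accepted: int,
                      min_len: int = 2, max_len: int = 8) -> int:
    """Pick the draft length for the next round from the last round's result."""
    if accepted == current:
        proposed = current + 1      # whole draft accepted: try a longer one
    elif accepted < current / 2:
        proposed = current - 1      # mostly rejected: waste less drafter work
    else:
        proposed = current          # mixed result: keep the current length
    return max(min_len, min(max_len, proposed))


length = 4
for accepted in (4, 5, 1, 1):       # accepted counts from four simulated rounds
    length = next_draft_length(length, accepted)
    print(length)
```

Production systems often smooth this decision over a window of rounds so that one unlucky draft does not shorten the next one.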

What Does the Future Hold for Inference Acceleration?

Research continues advancing multi-token prediction techniques. Ensemble drafters using multiple small models show promise for further improvements. These systems generate diverse candidate sequences, increasing the likelihood that at least one matches the main model's output.

Integration with other optimization techniques like quantization and kernel fusion could compound benefits. A quantized Gemma 4 model using multi-token prediction drafters might achieve 5-6x speedups compared to baseline inference, making even the largest models practical for edge deployment.

How Can You Achieve Faster AI Inference?

Multi-token prediction drafters make powerful language models like Gemma 4 more practical and cost-effective to serve. By combining speculative execution with a lightweight predictor model, the technique delivers 2-3x speedups without sacrificing output quality or requiring new hardware.

The approach works particularly well for applications with predictable patterns, though it provides benefits across diverse use cases. As the technique matures and integrates with other optimizations, expect even more impressive performance gains. Organizations implementing this technology today gain immediate competitive advantages through faster response times and reduced infrastructure costs.
