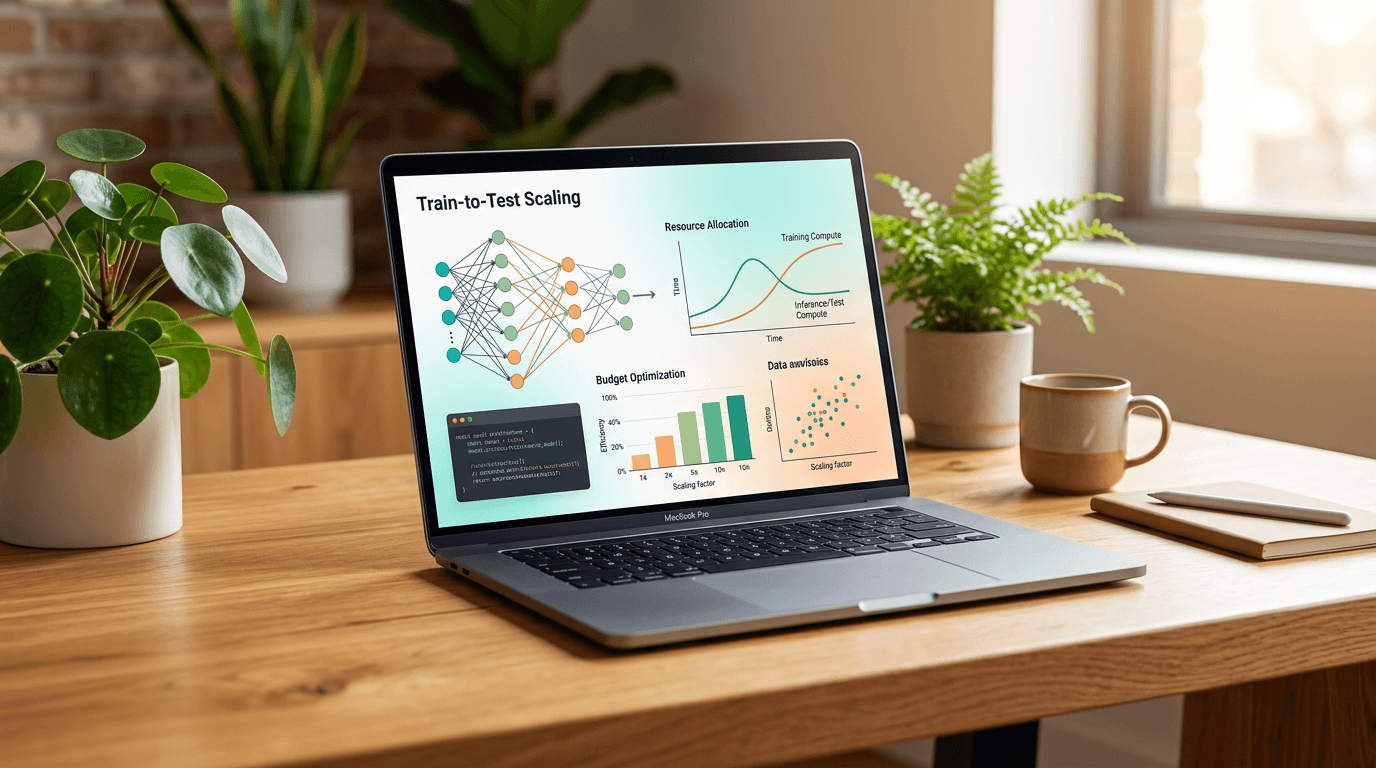

Train-to-Test Scaling: Optimize AI Compute Budgets

Train-to-Test scaling laws reframe AI economics by jointly optimizing model size, training data, and inference costs for reasoning-heavy applications.

Understanding Train-to-Test Scaling for AI Compute Optimization

Enterprise AI deployments face a critical challenge: traditional model training guidelines ignore inference costs. For businesses building reasoning-intensive applications like coding assistants or complex problem-solving tools, this oversight can destroy ROI faster than you can say "token budget."

Researchers at the University of Wisconsin-Madison and Stanford University have introduced Train-to-Test (T2) scaling laws, a framework that folds inference cost into the training decision. Instead of optimizing training and inference separately, T2 treats them as a single optimization problem. The result? Smaller models trained on far more data that outperform their larger counterparts on reasoning tasks while keeping per-query costs manageable.

Why Do Traditional Scaling Laws Fail Modern AI Applications?

The industry has operated under two separate scaling philosophies that never talked to each other. Pretraining scaling laws, like the famous Chinchilla rule, dictate that you should use roughly 20 training tokens for every model parameter. Test-time scaling laws guide inference decisions, like generating multiple reasoning samples to solve complex problems.

This separation creates a fundamental problem for real-world deployments. When you build agentic workflows that require repeated sampling at inference, large models become prohibitively expensive.

Nicholas Roberts, lead author of the research, puts it bluntly: "The inference stack breaks down when each individual inference call is expensive."

What Creates the Mathematical Language Barrier?

Pretraining and test-time scaling speak different mathematical languages. During training, developers measure performance using "loss," a continuous metric tracking prediction errors.

At deployment, they evaluate models using downstream metrics like pass@k, which measures the probability of getting at least one correct answer across multiple attempts. This disconnect meant no formula existed to jointly optimize model size, training data volume, and test-time inference budgets.

Until now.
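For readers who have not worked with pass@k, here is a minimal sketch of how it is commonly estimated in code-generation evaluations: draw n attempts per problem, count the c correct ones, and compute the chance that a random subset of k attempts contains at least one correct answer. This estimator comes from general evaluation practice, not from the T2 research itself, and the function name `pass_at_k` is our own.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k from n sampled attempts, of which c were correct.

    Illustrative helper (name and signature are assumptions, not from the
    T2 research). Uses the combinatorial estimator 1 - C(n-c, k) / C(n, k).
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) counts the size-k subsets with no correct attempt.
    # math.comb returns 0 when n - c < k, so the estimate becomes 1.0.
    return 1.0 - comb(n - c, k) / comb(n, k)


if __name__ == "__main__":
    # 3 correct answers out of 10 attempts, evaluated at several budgets k.
    for k in (1, 2, 5):
        print(k, round(pass_at_k(10, 3, k), 3))
```

Unlike loss, this number moves with the sampling budget k, which is why the two metrics are hard to place in one formula.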

How Do Train-to-Test Scaling Laws Work?

T2 scaling laws introduce a unified framework that predicts reasoning performance from three variables in a single equation:

- Model size (N): The number of parameters in your model

- Training tokens (D): The volume of data used during training

- Inference samples (k): The number of reasoning attempts at deployment

The framework combines pretraining cost (approximately 6ND FLOPs) and inference cost (2Nk) into one optimization formula. This lets businesses see the true cost-performance tradeoff across the entire model lifecycle.
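To make the two cost terms concrete, the sketch below adds them up for a single model. The function name and the `tokens_per_sample` and `num_queries` parameters are assumptions added for illustration; with their defaults of 1, the result reduces to the 6ND + 2Nk form quoted above. Treat it as back-of-the-envelope accounting, not the researchers' code.

```python
def lifecycle_flops(n_params, train_tokens, k_samples,
                    tokens_per_sample=1, num_queries=1):
    """Return (training, inference, total) FLOPs for one model.

    training  = 6 * N * D
    inference = 2 * N * k, scaled by two hypothetical deployment factors:
                tokens generated per sample and number of queries served.
    """
    train = 6 * n_params * train_tokens
    infer = 2 * n_params * k_samples * tokens_per_sample * num_queries
    return train, infer, train + infer


if __name__ == "__main__":
    # Hypothetical deployment: 1B parameters, 20B training tokens,
    # 8 samples per query, 512 generated tokens each, 1M queries.
    train, infer, total = lifecycle_flops(
        n_params=10**9, train_tokens=20 * 10**9, k_samples=8,
        tokens_per_sample=512, num_queries=10**6,
    )
    print(f"training:  {train:.3e} FLOPs")
    print(f"inference: {infer:.3e} FLOPs")
    print(f"inference share of total: {infer / total:.1%}")
```

The inference share grows with both k and query volume, which is the part of the bill that training-only scaling rules leave out.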

What Are the Two Approaches to Modeling Performance?

The researchers tested two mathematical approaches. The first modifies the Chinchilla loss equation by adding the inference variable (k), showing how increased test-time compute reduces overall error rates.

The second directly models pass@k accuracy, telling developers the probability their application will solve a problem given a specific compute budget. Both approaches reached the same conclusion: the compute-optimal frontier shifts dramatically away from standard scaling rules.
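For orientation, these are the standard building blocks the two approaches start from, written out as a sketch. The first line is the usual Chinchilla parametric loss in model size N and training tokens D, with fitted constants E, A, B, α, and β. The second is an idealized pass@k under the assumption, ours and not the researchers', that each sample succeeds independently with probability p. The exact k-dependent terms fitted in the T2 work are not given in this article and are not reproduced here.

```latex
% Chinchilla parametric loss (baseline that the first approach extends)
L(N, D) = E + \frac{A}{N^{\alpha}} + \frac{B}{D^{\beta}}

% Idealized pass@k, assuming k independent samples with per-sample success rate p
\text{pass@}k = 1 - (1 - p)^{k}
```

The second expression shows why repeated sampling helps: even a modest per-sample success rate compounds as k grows, provided each sample is cheap enough to afford.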

What Does the Research Reveal About Optimal Model Design?

The research team built an extensive testbed of over 100 language models, ranging from 5 million to 901 million parameters. They trained 21 new, heavily overtrained checkpoints from scratch and benchmarked the models across eight diverse tasks, including SciQ, OpenBookQA, and synthetic reasoning challenges.

The results were clear: heavily overtrained small models consistently outperformed larger, Chinchilla-optimal models when test-time sampling costs were factored in. The optimal choice is a model significantly smaller and trained on vastly more data than the traditional 20-tokens-per-parameter rule suggests.
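A rough worked example shows how far "overtrained" can sit from the 20-tokens-per-parameter rule. The sketch below holds the training budget fixed at C = 6ND, finds the Chinchilla-style point, then shrinks the model by a chosen factor and spends the freed budget on more tokens. The function names and the shrink factor are hypothetical; the numbers illustrate the arithmetic only and are not results from the study.

```python
import math


def chinchilla_point(train_flops, tokens_per_param=20.0):
    """Solve C = 6 * N * D with D = r * N, giving N = sqrt(C / (6 * r))."""
    n = math.sqrt(train_flops / (6.0 * tokens_per_param))
    return n, tokens_per_param * n


def overtrained_point(train_flops, shrink):
    """Shrink the Chinchilla-sized model by `shrink`, keep training FLOPs fixed.

    Returns (params, tokens, tokens_per_param). Illustrative helper only.
    """
    n_opt, _ = chinchilla_point(train_flops)
    n = n_opt / shrink
    d = train_flops / (6.0 * n)  # the same budget buys `shrink` times more tokens
    return n, d, d / n


if __name__ == "__main__":
    budget = 1.2e20  # hypothetical training budget in FLOPs
    n_opt, d_opt = chinchilla_point(budget)
    n, d, ratio = overtrained_point(budget, shrink=4)
    print(f"Chinchilla-style: {n_opt:.2e} params, {d_opt:.2e} tokens")
    print(f"4x smaller:       {n:.2e} params, {d:.2e} tokens, {ratio:.0f} tokens/param")
    # At equal per-query inference FLOPs (2 * N * k held constant),
    # the 4x smaller model can afford 4x as many samples.
    print("samples affordable relative to the larger model: 4x")
```

Shrinking the model by a factor s at a fixed training budget multiplies tokens per parameter by s squared, and it also multiplies the number of samples affordable per query by s.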

When Should Businesses Apply This Framework?

T2 scaling laws are not universal. Roberts clarifies that this approach is highly specialized: "I imagine that you would not see as much of a benefit for knowledge-heavy applications, such as chat models."

Instead, T2 is tailored to reasoning-heavy applications like coding, mathematical problem-solving, and complex decision-making tasks where repeated sampling is the primary test-time scaling method.

How Can Enterprise Developers Implement This Practically?

The technical barrier to implementing T2 scaling is surprisingly low. Roberts confirms: "Nothing fancy is required to perform test-time scaling with our current models." Developers can integrate standard infrastructure like KV caching to make the sampling process more efficient.

KV caching stores previously processed context so the model does not have to re-read the initial prompt for every new reasoning sample. This simple optimization makes repeated sampling dramatically more cost-effective.
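The saving can be estimated with simple arithmetic. The sketch below is a toy cost model, not a real inference stack: it charges roughly 2N FLOPs per processed token, ignores attention's length-dependent cost, memory limits, and batching, and assumes the prompt's cache can be shared across all k samples. The function and parameter names are our own.

```python
def sampling_flops(n_params, prompt_tokens, gen_tokens, k_samples,
                   share_prompt_cache):
    """Approximate FLOPs to draw k samples for one prompt (toy model)."""
    per_token = 2 * n_params
    # With a shared KV cache the prompt is processed once;
    # without it, every sample re-reads the prompt.
    prefill_passes = 1 if share_prompt_cache else k_samples
    prefill = per_token * prompt_tokens * prefill_passes
    decode = per_token * gen_tokens * k_samples
    return prefill + decode


if __name__ == "__main__":
    # Hypothetical workload: 500M parameters, 2,000-token prompt,
    # 300 generated tokens per sample, 16 samples.
    args = dict(n_params=5 * 10**8, prompt_tokens=2000,
                gen_tokens=300, k_samples=16)
    without = sampling_flops(**args, share_prompt_cache=False)
    shared = sampling_flops(**args, share_prompt_cache=True)
    print(f"no cache sharing: {without:.3e} FLOPs")
    print(f"shared KV cache:  {shared:.3e} FLOPs")
    print(f"reduction: {1 - shared / without:.1%}")
```

Under these assumptions the benefit is largest when prompts are long relative to each generated sample, which is typical of coding and agentic workloads that carry a lot of context.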

What Trade-offs and Limitations Should You Consider?

Extreme overtraining comes with practical considerations:

- Fine-tuning challenges: Overtrained models can be harder to fine-tune, though the research found this effect was not strong enough to shift the optimal strategy back toward Chinchilla scaling

- Data wall concerns: Pushing overtraining to the extreme may exhaust available high-quality training data

- Application specificity: The approach works best for reasoning tasks, not knowledge-heavy applications

- Infrastructure requirements: You need sufficient compute to handle multiple inference samples efficiently

What Is the Business Impact of Democratizing AI Reasoning?

T2 scaling laws serve as an equalizing force in the AI industry. The high price of frontier models creates barriers for scaling agentic applications that rely on reasoning capabilities.

This research provides an empirically tested blueprint for maximizing ROI without requiring massive compute budgets. For enterprise AI application developers training their own models, the implications are significant.

You can achieve state-of-the-art reasoning performance while keeping per-query inference costs manageable within real-world deployment budgets.

How Does T2 Optimize ROI Strategy?

The T2 framework fundamentally changes the economics of AI deployment. Instead of spending huge amounts on frontier models, businesses can:

- Train smaller models on larger datasets

- Allocate saved compute to generate multiple inference samples

- Achieve stronger performance on complex tasks

- Maintain manageable per-query costs at scale

The research team plans to open-source their checkpoints and code, allowing enterprises to plug in their own data and test the scaling behavior immediately.

What Should Business Leaders Take Away?

Train-to-Test scaling laws indicate that strong AI reasoning does not necessarily require massive compute budgets. Roberts summarizes the paradigm shift: "T2 fundamentally changes who gets to build strong reasoning models. You might not need massive compute budgets to get state-of-the-art reasoning. Instead, you need good data and smart allocation of your training and inference budget."

For businesses deploying reasoning-intensive AI applications, this framework offers a clear path forward. By jointly optimizing model size, training data, and inference samples, you maximize performance while controlling costs.

The days of treating training and inference as separate optimization problems are over. The compute-optimal strategy is clear: train compact models on extensive datasets, then leverage the computational savings to run multiple reasoning samples at inference.

This approach delivers superior results while keeping AI deployment economically viable at enterprise scale.
