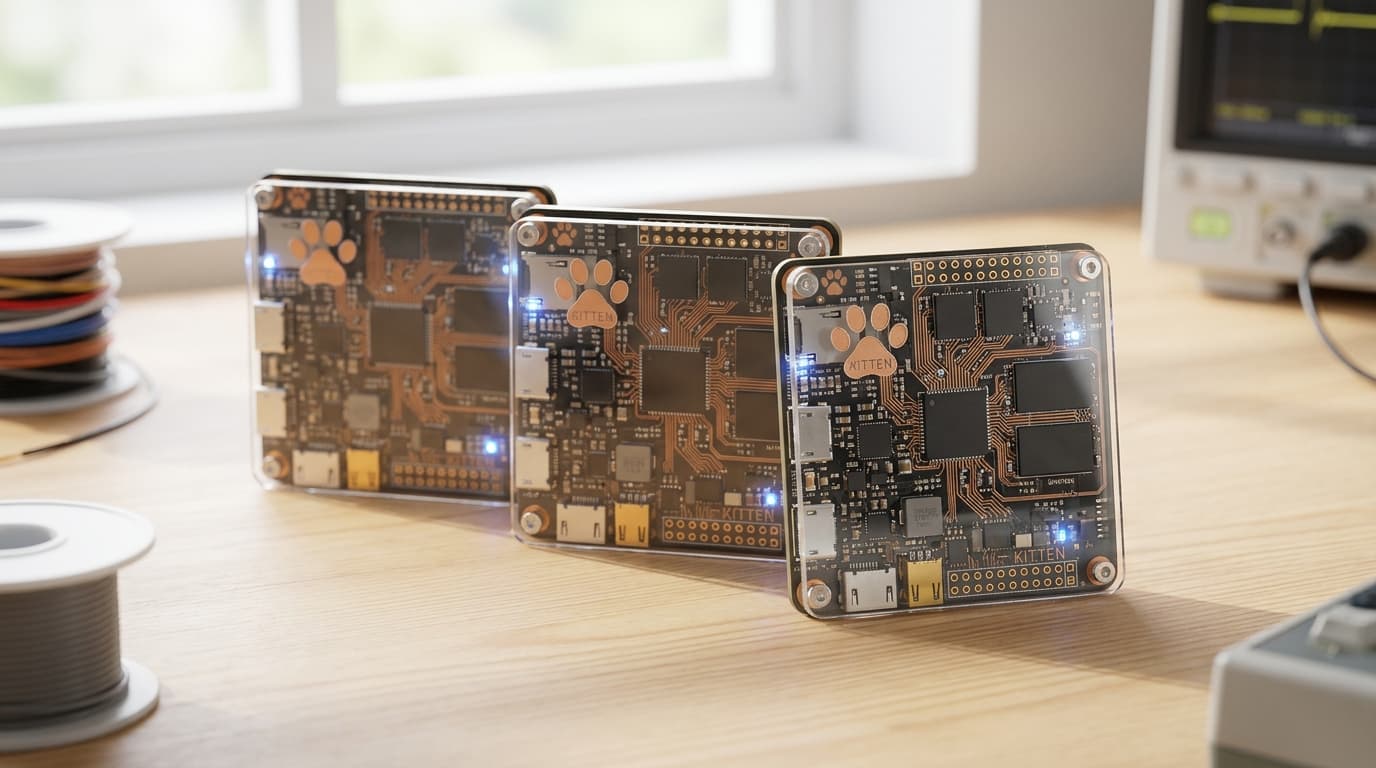

Kitten TTS Models: 3 New Releases, the Smallest Under 25MB

Three new Kitten TTS models have arrived, with the smallest weighing less than 25MB. These compact text-to-speech solutions bring high-quality voice synthesis to resource-constrained devices.

Lightweight TTS Revolution: Can Speech Synthesis Run on 25MB?

Neural text-to-speech has traditionally demanded substantial computational resources and storage space. The release of three new Kitten TTS models challenges this norm by delivering natural-sounding voice synthesis in packages small enough to run on edge devices. The smallest model comes in at under 25MB, putting neural speech generation within reach of developers working with limited hardware.

These compact models represent a significant shift in how we approach speech synthesis for embedded systems, IoT devices, and mobile applications. Neural TTS engines often require hundreds of megabytes or even gigabytes of storage, placing them out of reach for many use cases.

What Are the New Kitten TTS Models?

Kitten TTS is a family of neural text-to-speech models optimized for size and efficiency while keeping speech output natural-sounding. The three new releases target different use cases based on available resources and quality requirements. Each model relies on compression techniques such as quantization and knowledge distillation, together with architectural optimizations, to minimize its footprint.

The development team focused on creating models that could run inference on CPU-only devices. This design choice eliminates the need for GPU acceleration, broadening the range of compatible hardware. Mobile phones, Raspberry Pi devices, and embedded systems can now handle real-time speech synthesis locally.

How Do the Three Models Compare in Size and Performance?

The three models offer a graduated approach to balancing size against quality:

- Kitten Nano: Under 25MB, optimized for ultra-low-resource environments

- Kitten Lite: Approximately 50MB, balancing size and audio fidelity

- Kitten Standard: Around 100MB, delivering higher quality with reasonable footprint

Each model supports multiple speaking rates and handles various text inputs including punctuation, numbers, and basic formatting. The inference speed varies by hardware but remains fast enough for real-time applications on modern processors.

Why Do Lightweight TTS Models Matter?

The push toward smaller AI models addresses several critical challenges in modern software development. Privacy concerns drive demand for on-device processing that keeps sensitive data local. Network latency issues make cloud-based TTS impractical for real-time applications.

Cost considerations favor one-time downloads over ongoing API charges. Edge computing continues to gain traction across industries. Smart home devices, automotive systems, and industrial equipment increasingly require voice interfaces that cannot always rely on internet connectivity.

What Applications Benefit from Compact TTS?

The reduced size opens up numerous practical applications:

- Accessibility tools running on older or budget smartphones

- Offline navigation systems providing voice guidance without data connections

- Educational devices for language learning in areas with limited connectivity

- Medical equipment delivering voice alerts and instructions

- IoT devices adding voice feedback without cloud dependencies

Developers working on resource-constrained projects can now integrate voice output without architectural compromises. The models work within the storage and memory limitations of embedded Linux systems and mobile platforms.

How Do Kitten TTS Models Achieve Such Small Size?

The engineering behind these compact models involves several optimization strategies. Neural architecture search identified efficient model structures that minimize parameters while preserving capability. Quantization reduces the precision of model weights from 32-bit floats to 8-bit integers, cutting weight storage to roughly a quarter without significant quality degradation.
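To make the quantization step concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. The article does not say which quantization scheme Kitten TTS uses, so the scheme, the sample weights, and the 20-million parameter count are all illustrative assumptions.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


# Illustrative weights, not taken from any real model.
weights = [0.52, -1.27, 0.003, 0.9, -0.41]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))

# Storage arithmetic: float32 needs 4 bytes per weight, int8 needs 1.
param_count = 20_000_000  # hypothetical parameter count
float32_mb = param_count * 4 / 1_000_000
int8_mb = param_count * 1 / 1_000_000

print(quantized, f"max error {max_error:.4f}")
print(f"float32: {float32_mb:.0f} MB, int8: {int8_mb:.0f} MB")
```

The storage arithmetic is the part that matters for footprint: the same weights take one byte each instead of four, at the cost of a small, bounded rounding error per weight.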

Knowledge distillation played a crucial role in the development process. Smaller student models were trained to replicate the outputs of larger, more capable teacher models. This approach preserved much of the quality while dramatically reducing model complexity.
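One common way to set up distillation is to blend two training signals: how closely the student matches the teacher, and how closely it matches the ground truth. The sketch below shows that blended loss on a single made-up output frame. The article does not describe the actual loss used for Kitten TTS, so the mean-squared-error formulation, the `alpha` weighting, and the sample values are assumptions for illustration.

```python
def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def distillation_loss(student_out, teacher_out, target, alpha=0.5):
    """Blend imitation loss (student vs. teacher) with task loss (student vs. target).

    alpha=1.0 trains purely on the teacher's outputs; alpha=0.0 ignores the teacher.
    """
    imitation = mse(student_out, teacher_out)
    task = mse(student_out, target)
    return alpha * imitation + (1 - alpha) * task


# Made-up acoustic feature frames (for example, three mel-spectrogram bins).
teacher_frame = [0.80, 0.10, -0.30]
target_frame = [0.90, 0.00, -0.20]
student_frame = [0.50, 0.20, -0.10]

loss = distillation_loss(student_frame, teacher_frame, target_frame)
print(f"distillation loss: {loss:.4f}")
```

During training, an optimizer would adjust the student's parameters to drive this loss down, so the small model inherits behavior the large model has already learned.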

What Technical Innovations Enable This Efficiency?

The models use a streamlined vocoder, the component that converts acoustic features such as mel spectrograms into audio waveforms. Traditional neural vocoders often account for much of a TTS system's size. Kitten TTS employs a lightweight vocoder design that maintains naturalness while occupying minimal space.

The text processing pipeline also underwent optimization. Phoneme conversion and prosody prediction use compact lookup tables and simplified neural networks. These components handle text normalization, stress assignment, and intonation patterns efficiently.
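As an illustration of lookup-table text normalization, the sketch below expands abbreviations and spells out small numbers before text would be handed to a phoneme converter. It is not the Kitten TTS pipeline: the tables, the `normalize` function, and the 0 to 999 range are assumptions chosen to keep the example short.

```python
import re

ONES = [
    "zero", "one", "two", "three", "four", "five", "six", "seven", "eight",
    "nine", "ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
    "sixteen", "seventeen", "eighteen", "nineteen",
]
TENS = ["", "", "twenty", "thirty", "forty", "fifty",
        "sixty", "seventy", "eighty", "ninety"]

# Hypothetical abbreviation table; a real system would carry a larger one.
ABBREVIATIONS = {"Dr.": "Doctor", "St.": "Street"}


def number_to_words(n):
    """Spell out an integer from 0 to 999 using the lookup tables."""
    if n < 20:
        return ONES[n]
    if n < 100:
        tens, ones = divmod(n, 10)
        return TENS[tens] + ("-" + ONES[ones] if ones else "")
    hundreds, rest = divmod(n, 100)
    words = ONES[hundreds] + " hundred"
    return words + (" " + number_to_words(rest) if rest else "")


def normalize(text):
    """Expand known abbreviations, then spell out standalone 1-3 digit numbers."""
    for abbreviation, expansion in ABBREVIATIONS.items():
        text = text.replace(abbreviation, expansion)
    return re.sub(r"\b\d{1,3}\b",
                  lambda match: number_to_words(int(match.group())), text)


print(normalize("Turn left in 250 meters onto Oak St."))
```

Tables like these cost a few kilobytes at most, which is why this stage can stay small even when the acoustic model dominates the size budget.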

How Does Kitten TTS Compare to Other Solutions?

Traditional engines like eSpeak occupy similar size ranges but often produce more robotic-sounding speech. Neural alternatives from major tech companies deliver superior quality but require 500MB to several gigabytes of storage.

The Kitten models aim for a middle ground. They provide more natural speech than classic formant synthesizers such as eSpeak while remaining far smaller than high-end neural TTS. Audio quality falls between basic synthesizers and premium cloud services.

What Do Performance Benchmarks Show?

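Reported benchmarks are promising. On a Raspberry Pi 4, the Nano model is cited at approximately 0.8x real time and the Standard model at 1.5x. Read as real-time factors (synthesis time divided by audio duration), these figures mean Nano generates speech slightly faster than it plays back, while the larger Standard model needs about 1.5 seconds of compute per second of audio on that board.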
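Throughput figures for TTS are commonly expressed as a real-time factor (RTF): synthesis time divided by the duration of the audio produced, so values below 1 mean speech is generated faster than it plays back. Here is a minimal sketch of measuring it on your own hardware. The article does not show the Kitten TTS API, so `fake_synthesize` is a hypothetical placeholder for whatever synthesis call you use.

```python
import time


def real_time_factor(synthesis_seconds, audio_seconds):
    """RTF = time spent synthesizing / duration of the audio produced."""
    if audio_seconds <= 0:
        raise ValueError("audio duration must be positive")
    return synthesis_seconds / audio_seconds


def measure_rtf(synthesize, text, sample_rate):
    """Time one synthesis call and relate it to the length of its output."""
    start = time.perf_counter()
    samples = synthesize(text)
    elapsed = time.perf_counter() - start
    return real_time_factor(elapsed, len(samples) / sample_rate)


def fake_synthesize(text):
    """Hypothetical placeholder: returns 0.1 s of silence per character at 16 kHz."""
    return [0] * (1600 * len(text))


rtf = measure_rtf(fake_synthesize, "Hello", sample_rate=16000)
print(f"RTF: {rtf:.6f}")
```

For streaming playback without gaps, the measured RTF needs to stay below 1 on the target device, ideally with some headroom for other work.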

Modern smartphones handle all three models comfortably with room for other processing tasks. Memory usage during inference stays under 100MB for all models. This footprint allows multiple instances to run simultaneously on devices with 2GB RAM or more. Battery consumption remains reasonable for mobile applications, comparable to audio playback rather than heavy computation.

How Can Developers Get Started with Kitten TTS?

Developers can integrate these models through straightforward APIs. The system supports common programming languages including Python, JavaScript, and C++. Installation requires downloading the model file and a small runtime library that handles inference.

The basic workflow involves passing text strings to the synthesis function and receiving audio data in return. Output formats include WAV and raw PCM, suitable for immediate playback or further processing. Configuration options let developers adjust speaking rate, add pauses, and control other prosodic features.
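The text-in, audio-out workflow can be sketched as follows. Because the article does not document the actual Kitten TTS functions, `synthesize` here is a hypothetical stand-in that emits a test tone rather than speech, and the 22,050 Hz sample rate is an assumption. The WAV packaging uses Python's standard `wave` module and applies to any engine that returns raw 16-bit PCM.

```python
import io
import math
import struct
import wave

SAMPLE_RATE = 22050  # assumed output rate; check your model's documentation


def synthesize(text):
    """Hypothetical stand-in for a TTS call: returns 16-bit mono PCM bytes.

    Emits a 440 Hz tone lasting 50 ms per character instead of real speech.
    """
    frame_count = SAMPLE_RATE * len(text) // 20
    return b"".join(
        struct.pack("<h", int(12000 * math.sin(2 * math.pi * 440 * i / SAMPLE_RATE)))
        for i in range(frame_count)
    )


def pcm_to_wav(pcm, sample_rate=SAMPLE_RATE):
    """Wrap raw 16-bit mono PCM in a WAV container and return the file bytes."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav_file:
        wav_file.setnchannels(1)   # mono
        wav_file.setsampwidth(2)   # 2 bytes per sample = 16-bit
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(pcm)
    return buffer.getvalue()


pcm = synthesize("Hello from the edge.")
wav_bytes = pcm_to_wav(pcm)
print(f"{len(pcm)} bytes of PCM, {len(wav_bytes)} bytes as WAV")
```

Raw PCM suits direct playback through an audio device, while the WAV wrapper adds the header that most players and audio tools expect.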

Which Model Should You Choose for Your Project?

Choosing the right model depends on specific project requirements. Applications prioritizing minimal size should start with Kitten Nano. Projects where audio quality matters more can use Kitten Standard. The Lite version serves as a versatile middle option for most use cases.

Licensing terms permit commercial use without royalties. The open-source nature encourages community contributions and custom modifications. Developers can fine-tune models on specific voices or languages if needed.
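Returning to model choice, the size guidance in this section can be sketched as a small helper that picks the largest model fitting a storage budget. The sizes are the approximate figures quoted in this article, with Nano's "under 25MB" treated as an upper bound. The `pick_model` function is an illustrative assumption, not part of any Kitten TTS tooling.

```python
# Approximate sizes in MB as quoted in this article, smallest first.
MODELS = [
    ("Kitten Nano", 25),
    ("Kitten Lite", 50),
    ("Kitten Standard", 100),
]


def pick_model(storage_budget_mb):
    """Return the largest model that fits the budget, or None if none fits."""
    fitting = [name for name, size_mb in MODELS if size_mb <= storage_budget_mb]
    return fitting[-1] if fitting else None


for budget in (10, 30, 64, 512):
    print(f"{budget} MB budget -> {pick_model(budget)}")
```

Storage is only one constraint. If latency matters more than fidelity on slow hardware, it can be reasonable to choose a smaller model than the budget allows.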

What Does the Future Hold for Compact TTS?

The release of these models signals broader trends in AI optimization. Research continues into even smaller architectures and more efficient training methods. Multilingual support will likely expand, bringing compact TTS to more languages and accents.

On-device personalization represents another frontier. Future versions might learn user preferences or adapt to specific speaking styles without cloud uploads. This capability would enhance both privacy and user experience.

How Will the Community Shape TTS Development?

As adoption increases, expect supporting tools and resources to emerge. Voice customization utilities, pronunciation dictionaries, and integration plugins will make implementation easier. The developer community will likely share optimizations and use case examples.

The success of compact models like Kitten TTS may influence other AI domains. Similar optimization techniques could shrink computer vision models, natural language processing systems, and other neural networks for edge deployment.

Conclusion: The New Era of Accessible Speech Synthesis

The three new Kitten TTS models demonstrate that natural-sounding speech synthesis no longer requires massive computational resources. With sizes ranging from under 25MB to roughly 100MB, these models make voice output practical for embedded systems, mobile apps, and offline devices.

They balance audio quality against efficiency, opening up applications previously limited by storage and processing constraints. Developers gain new options for adding voice interfaces without cloud dependencies or hefty resource requirements. As optimization techniques advance, expect even more capable models in similarly compact packages.
