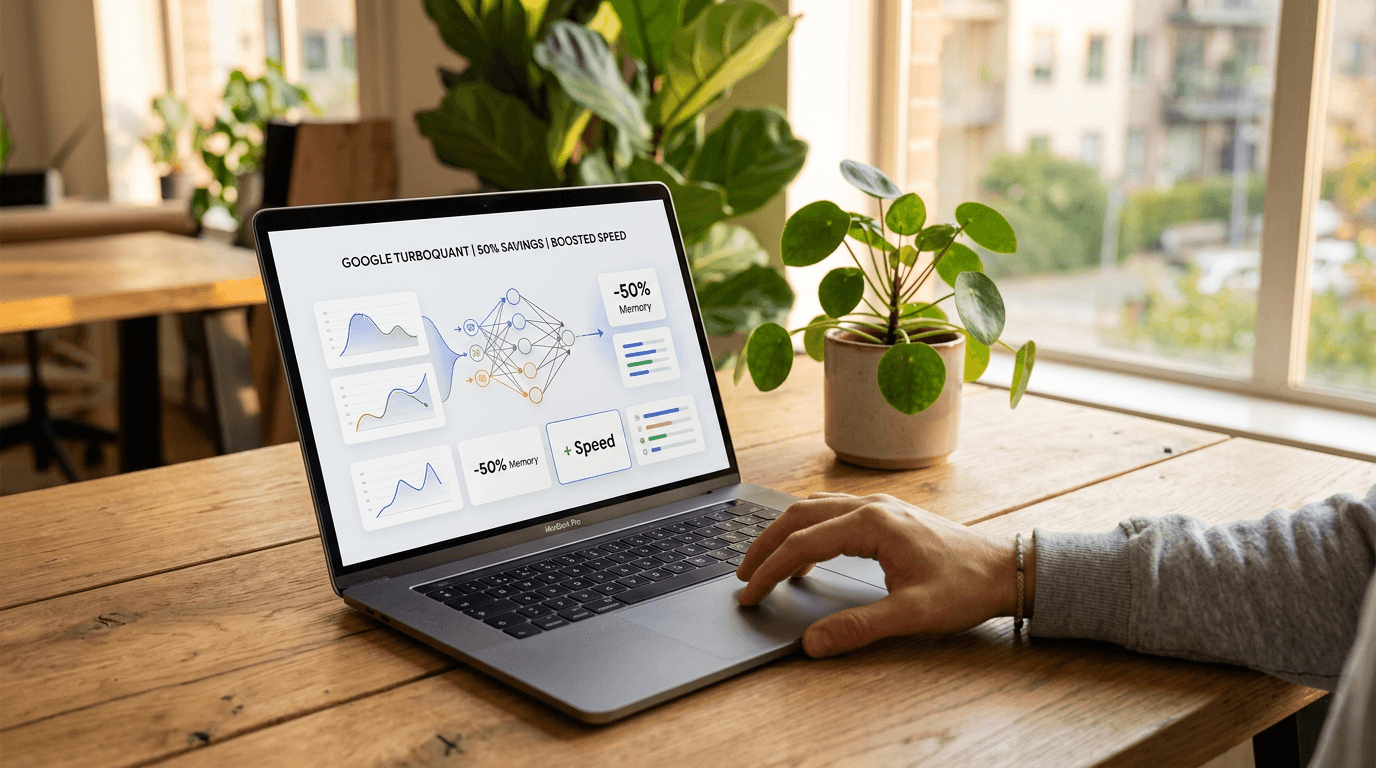

Google TurboQuant Cuts AI Memory Costs 50% and Boosts Spe...

Google Research released TurboQuant, a breakthrough algorithm that compresses AI memory by 6x and accelerates processing 8x, offering enterprises immediate cost savings exceeding 50% without retraining.

How Google's TurboQuant Algorithm Transforms AI Economics

The artificial intelligence industry faces a brutal paradox. As language models grow more capable of processing massive documents and complex conversations, they hit a hardware wall that threatens to make these advances economically unviable.

Google's TurboQuant algorithm, released this week as open-source research, gives enterprises a way to shrink the key-value (KV) cache that models use as working memory by at least 6x and to speed up attention computation by as much as 8x. Together, these gains could potentially cut operational costs by more than 50%.

For business leaders investing millions in AI infrastructure, this represents more than an incremental improvement. TurboQuant addresses the "Key-Value cache bottleneck" that forces companies to choose between capability and cost, offering a software-only fix that works with existing hardware and models.

Why Do AI Models Face a Memory Crisis?

Every token a language model processes is stored as high-dimensional key and value vectors in GPU video random access memory (VRAM). This key-value (KV) cache acts as a "digital cheat sheet" that lets the model maintain context across long conversations or documents.

The problem? This cache swells rapidly, consuming expensive VRAM and throttling performance.
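
To make the growth concrete, the sketch below estimates KV cache size from a model's shape. The layer count, head count, and head dimension are illustrative assumptions for an 8B-class model with grouped-query attention, not figures from Google's research, and the "6x smaller" line simply divides the bit width by six.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bits_per_value, batch_size=1):
    """Approximate KV cache size in bytes.

    One key vector and one value vector are stored per layer, per KV head,
    per token, which is where the leading factor of 2 comes from.
    """
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return values * bits_per_value / 8


# Assumed, illustrative model shape (not taken from the article).
cfg = dict(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=100_000)

fp16_bytes = kv_cache_bytes(bits_per_value=16, **cfg)
compressed_bytes = kv_cache_bytes(bits_per_value=16 / 6, **cfg)

print(f"16-bit cache:     {fp16_bytes / 2**30:.1f} GiB")
print(f"6x smaller cache: {compressed_bytes / 2**30:.1f} GiB")
```

The cache scales linearly with context length and batch size, so doubling either one doubles the VRAM the cache consumes.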

Traditional compression methods create their own problems. When high-precision floating-point values are rounded to low-bit integers, quantization errors accumulate until models hallucinate or lose coherence. Most methods also store "quantization constants" (such as a scale and zero point for each block of values) as metadata, adding 1-2 bits per number. At 3 or 4 bits per value, that overhead erodes much of the compression gain, leaving enterprises stuck paying premium prices for high-bandwidth memory.
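
The overhead described above can be sketched with a generic min-max block quantizer. This is a textbook scheme used for illustration, not any specific library's format; the 16-bit constants and the block sizes of 32 and 16 are assumptions chosen because they reproduce the 1-2 bit range.

```python
def quantize_block(block, bits):
    """Min-max quantize one block; returns integer codes plus two constants."""
    lo, hi = min(block), max(block)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels or 1.0  # fall back to 1.0 for a constant block
    codes = [round((x - lo) / scale) for x in block]
    return codes, scale, lo


def dequantize_block(codes, scale, lo):
    """Rebuild approximate values from the codes and the stored constants."""
    return [lo + c * scale for c in codes]


def effective_bits(payload_bits, block_size, constant_bits=16,
                   constants_per_block=2):
    """Bits per number once the per-block constants are counted."""
    return payload_bits + constants_per_block * constant_bits / block_size


for block_size in (32, 16):
    print(block_size, effective_bits(3, block_size))
```

With these assumed sizes, a nominal 3-bit format costs 4 bits per number at a block size of 32 and 5 bits at a block size of 16.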

How Does TurboQuant Solve the Compression Problem?

Google's solution employs a two-stage mathematical framework that eliminates the traditional compression tax. The first stage, PolarQuant, reimagines how vectors get mapped in high-dimensional space.

Instead of standard Cartesian coordinates, it converts vectors into polar coordinates with a radius and angles. After a random rotation, these angle distributions become highly predictable and concentrated. Because the data shape is now known, the system eliminates expensive normalization constants that traditional methods must store for every data block.
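
The idea can be sketched in a simplified, one-level form: rotate the vector with a random orthogonal matrix, pair up coordinates, and quantize each pair's angle on a fixed grid. This is an illustration under stated assumptions, not Google's implementation. All function names are hypothetical, radii are kept at full precision for brevity, and the uniform angle grid stands in for whatever codebook the published method uses.

```python
import math
import random


def random_rotation(dim, rng):
    """Random orthogonal matrix built by Gram-Schmidt on Gaussian rows."""
    rows = []
    while len(rows) < dim:
        v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for u in rows:
            proj = sum(a * b for a, b in zip(v, u))
            v = [a - proj * b for a, b in zip(v, u)]
        norm = math.sqrt(sum(a * a for a in v))
        if norm > 1e-9:
            rows.append([a / norm for a in v])
    return rows


def rotate(matrix, vec):
    return [sum(m * x for m, x in zip(row, vec)) for row in matrix]


def to_polar_pairs(vec):
    """Convert consecutive coordinate pairs to (radius, angle)."""
    return [(math.hypot(vec[i], vec[i + 1]), math.atan2(vec[i + 1], vec[i]))
            for i in range(0, len(vec), 2)]


def quantize_angle(theta, bits):
    """Snap an angle to a fixed uniform grid; no per-block constant is stored."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    return int((theta + math.pi) / step) % levels


def dequantize_angle(code, bits):
    step = 2 * math.pi / (1 << bits)
    return -math.pi + (code + 0.5) * step


rng = random.Random(0)
dim, bits = 8, 4
R = random_rotation(dim, rng)
x = [rng.uniform(-1, 1) for _ in range(dim)]

pairs = to_polar_pairs(rotate(R, x))
codes = [quantize_angle(theta, bits) for _, theta in pairs]

# Reconstruct: rebuild each pair from its radius and quantized angle,
# then undo the rotation with the transpose (R is orthogonal).
y_hat = []
for (radius, _), code in zip(pairs, codes):
    t = dequantize_angle(code, bits)
    y_hat += [radius * math.cos(t), radius * math.sin(t)]
x_hat = [sum(R[i][j] * y_hat[i] for i in range(dim)) for j in range(dim)]
```

Because the grid is fixed in advance, the only stored data in this sketch are the angle codes and radii; nothing like a per-block scale or zero point is needed.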

The second stage applies a 1-bit Quantized Johnson-Lindenstrauss (QJL) transform to the residual error left by the first stage. Each projected residual value is reduced to a single sign bit (+1 or -1), which yields an unbiased estimator of inner products. When the model calculates attention scores to determine which prompt words matter most, the compressed estimate matches the high-precision original in expectation.
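
A minimal sketch of a sign-bit inner-product estimator of this kind follows. For brevity it is applied to a whole vector rather than to a first-stage residual, and the function names, the dimension, and the number of projections are assumptions for illustration. The sqrt(pi/2) factor comes from the fact that the expected absolute value of a standard Gaussian is sqrt(2/pi).

```python
import math
import random


def gaussian_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def qjl_encode(S, key):
    """Keep one sign bit per random projection, plus the key's norm."""
    signs = [1 if dot(row, key) >= 0 else -1 for row in S]
    return signs, math.sqrt(dot(key, key))


def qjl_inner_product(S, query, signs, key_norm):
    """Estimate <query, key> from sign bits; the query stays full precision."""
    total = sum(dot(row, query) * s for row, s in zip(S, signs))
    return math.sqrt(math.pi / 2) * key_norm * total / len(S)


rng = random.Random(0)
d, m = 16, 2000  # vector dimension and number of projections (assumed)
S = gaussian_matrix(m, d, rng)
key = [rng.gauss(0.0, 1.0) for _ in range(d)]
query = [rng.gauss(0.0, 1.0) for _ in range(d)]

signs, key_norm = qjl_encode(S, key)
estimate = qjl_inner_product(S, query, signs, key_norm)
print("exact:", dot(query, key), "estimate:", estimate)
```

The estimate is unbiased, and its variance shrinks as the number of projections grows, so the projection count trades storage for accuracy.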

What Performance Gains Can Enterprises Expect?

The "Needle-in-a-Haystack" benchmark tests whether AI can find a specific sentence hidden within 100,000 words. TurboQuant achieved perfect recall scores across open-source models like Llama-3.1-8B and Mistral-7B while reducing KV cache memory by at least 6x.

This quality neutrality is rare in extreme quantization, where 3-bit systems typically suffer significant accuracy degradation. Beyond chatbots, TurboQuant also applies to high-dimensional vector search.

Modern semantic search compares meanings across billions of vectors rather than matching keywords. TurboQuant consistently achieves superior recall ratios compared to existing methods like RaBitQ and Product Quantization, with virtually zero indexing time.
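
For readers unfamiliar with the metric, recall in this setting is typically measured as the fraction of the true nearest neighbors that a compressed index still returns. The sketch below shows that calculation with a brute-force search; rounding the vectors to one decimal place is only a stand-in for a real quantizer, and the helper names are hypothetical.

```python
import random


def top_k(query, vectors, k):
    """Indices of the k vectors with the largest inner product to the query."""
    scores = [(sum(q * v for q, v in zip(query, vec)), i)
              for i, vec in enumerate(vectors)]
    return [i for _, i in sorted(scores, reverse=True)[:k]]


def recall_at_k(approx_ids, exact_ids, k):
    """Share of the exact top-k that also appears in the approximate top-k."""
    truth = set(exact_ids[:k])
    return len(truth & set(approx_ids[:k])) / len(truth)


rng = random.Random(0)
vectors = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(200)]
query = [rng.gauss(0.0, 1.0) for _ in range(8)]

# Crude stand-in for compression: keep one decimal place per coordinate.
compressed = [[round(v, 1) for v in vec] for vec in vectors]

exact = top_k(query, vectors, 10)
approx = top_k(query, compressed, 10)
print("recall@10:", recall_at_k(approx, exact, 10))
```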

How Fast Does TurboQuant Run in Production?

On NVIDIA H100 accelerators, TurboQuant's 4-bit implementation computed attention logits up to 8x faster than an unquantized 32-bit baseline. This is a critical speedup for production deployments where response time directly affects user experience and operational costs.

Technical analyst Prince Canuma implemented TurboQuant in MLX to test the Qwen3.5-35B model. Across context lengths from 8,500 to 64,000 tokens, he reported 100% exact match at every quantization level. The 2.5-bit TurboQuant configuration reduced KV cache by nearly 5x with zero accuracy loss, validating Google's internal research on third-party models.

How Should Business Leaders Implement TurboQuant?

Unlike many AI breakthroughs requiring costly retraining or specialized datasets, TurboQuant is training-free and data-oblivious. Organizations can apply these quantization techniques to existing fine-tuned models based on Llama, Mistral, or Gemma to realize immediate memory savings without risking specialized performance.

What Are the Four Critical Action Steps?

Enterprise IT and DevOps teams should consider these implementation priorities:

Optimize Inference Pipelines: Integrating TurboQuant into production servers can reduce GPU requirements for long-context applications, potentially cutting cloud compute costs by 50% or more.

Expand Context Capabilities: Organizations working with massive internal documentation can now offer much longer context windows for retrieval-augmented generation tasks without prohibitive VRAM overhead.

Enhance Local Deployments: For companies with strict data privacy requirements, TurboQuant makes it more feasible to run long-context workloads on on-premise hardware or edge devices whose memory was previously too small for the KV cache those workloads require.

Re-Evaluate Hardware Procurement: Before investing in massive HBM-heavy GPU clusters, operations leaders should assess how much of the memory bottleneck can be resolved through software-driven efficiency gains.

What Impact Does TurboQuant Have on the AI Market?

The release triggered immediate market movements. Following Tuesday's announcement, analysts observed downward trends in major memory supplier stocks, including Micron and Western Digital.

The market reaction reflects a view that if AI companies can cut memory requirements by 6x through software alone, demand for high-bandwidth memory may grow more slowly than expected. The community response combined technical validation with practical experimentation.

Google's original announcement generated over 7.7 million views on X, signaling industry hunger for memory crisis solutions. Within 24 hours, developers began porting the algorithm to popular local AI libraries like MLX for Apple Silicon and llama.cpp.

How Does TurboQuant Democratize AI Access?

Several analysts highlighted how TurboQuant narrows the gap between free local AI and expensive cloud subscriptions. Models running locally on consumer hardware like Mac Minis can now handle 100,000-token conversations without typical quality degradation.

This democratization enables smaller enterprises to deploy sophisticated AI capabilities without enterprise-scale budgets. Organizations previously priced out of advanced AI applications can now compete with larger competitors.

Why Did Google Release This Technology as Open Source?

The timing proves strategic, coinciding with upcoming presentations at the International Conference on Learning Representations 2026 in Rio de Janeiro and the Annual Conference on Artificial Intelligence and Statistics 2026 in Tangier. By releasing methodologies under an open research framework, Google provides essential infrastructure for the emerging "Agentic AI" era.

This approach serves multiple business objectives. It establishes Google as a thought leader in AI efficiency while creating ecosystem effects that benefit Google Cloud services. As more organizations adopt these techniques, they generate demand for complementary Google technologies and services.

What Does TurboQuant Mean for AI Economics?

TurboQuant suggests the next era of AI progress will be defined as much by mathematical elegance as by brute force. The industry is shifting from "bigger models" to "better memory," a change that could lower AI serving costs globally.

Some analysts reference Jevons' Paradox, noting that efficiency gains often increase total consumption rather than decrease it. As AI becomes cheaper to run, organizations may deploy it more extensively, potentially maintaining or even increasing total memory demand despite per-application efficiency gains.

What Are the Long-Term Competitive Implications?

Enterprises that quickly adopt TurboQuant-style optimizations gain competitive advantages in two dimensions. First, they reduce operational costs immediately, improving margins on AI-powered services.

Second, they can offer more sophisticated features at price points competitors cannot match without similar optimizations. This creates a temporary moat for early adopters until the broader market catches up.

Organizations delaying implementation risk falling behind competitors who can deliver superior AI experiences at lower costs. The window for competitive advantage remains open but will narrow as adoption spreads.

Should Your Organization Implement TurboQuant Now?

TurboQuant proves that AI limitations extend beyond transistor counts to how elegantly we translate information complexity into digital bits. For enterprises, this represents more than academic research - it is a tactical unlock that transforms existing hardware into significantly more powerful assets.

The algorithm's training-free, open-source nature removes typical adoption barriers. Organizations can implement these techniques immediately without retraining models or purchasing new hardware.

With potential cost reductions exceeding 50% and performance improvements reaching 8x, TurboQuant offers one of the highest ROI opportunities in current AI infrastructure optimization. Business leaders should evaluate how these efficiency gains reshape their AI strategies, from cloud spending to on-premise capabilities.

The companies that move quickly to integrate these optimizations will define the next competitive landscape in AI-powered services. Your organization's AI economics just changed - the question is whether you'll capitalize on it before your competitors do.
