Google's Always On Memory Agent Ditches Vector Databases

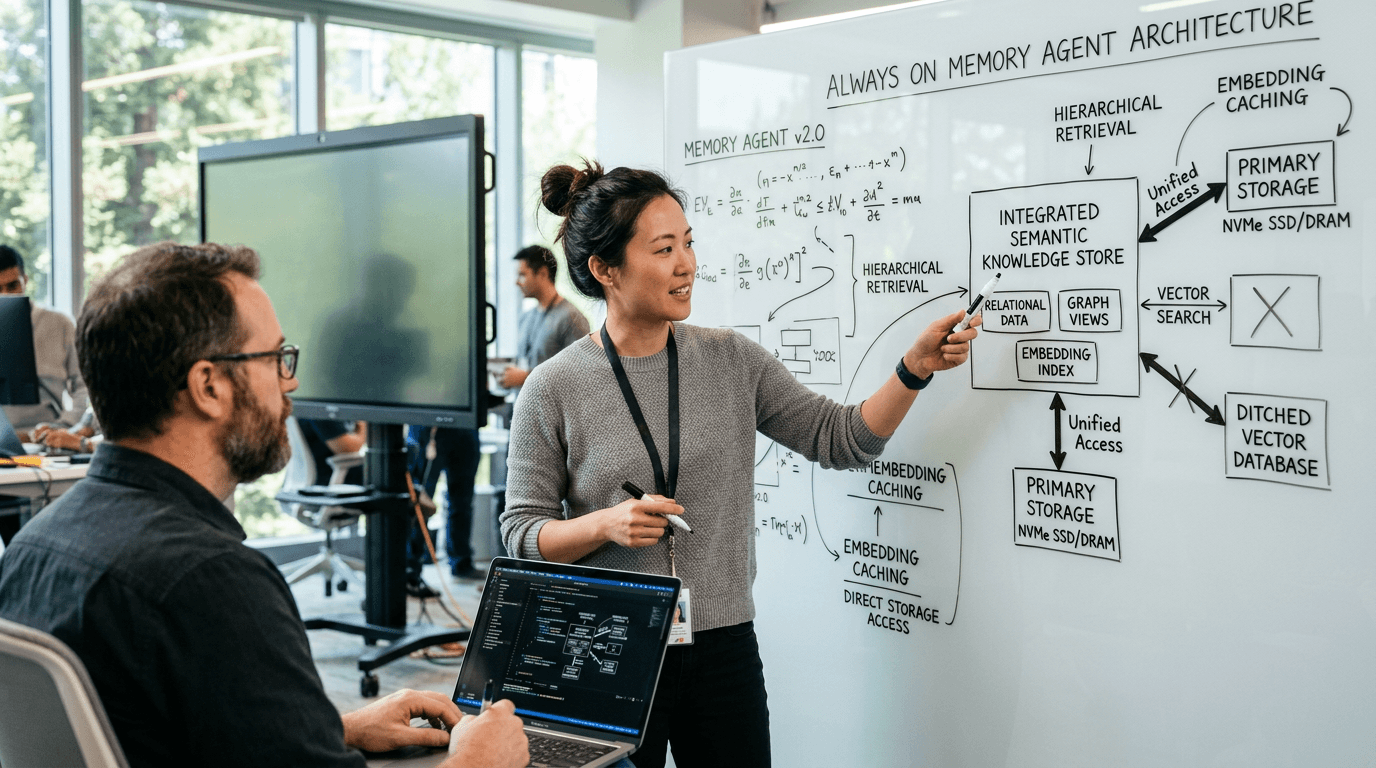

Google's Shubham Saboo released an open-source Always On Memory Agent that replaces vector databases with LLM-driven persistent memory, signaling a shift in agent infrastructure design.

Google Releases Always On Memory Agent That Challenges Traditional AI Architecture

Google senior AI product manager Shubham Saboo has published an open-source "Always On Memory Agent" that tackles one of the hardest problems in AI development: giving agents reliable, persistent memory without the complexity of traditional vector databases. Released on the official Google Cloud Platform GitHub page under an MIT License, the project demonstrates a fundamentally different approach to how AI systems remember and retrieve information.

For enterprise developers building support systems, research assistants, and workflow automation, this release matters less as a finished product and more as a signal about where agent infrastructure is headed. The architecture suggests that simpler, LLM-driven memory systems may replace the embedding pipelines and vector storage that currently dominate retrieval stacks.

How Does This Memory Agent Differ From Traditional Approaches?

Google built the Always On Memory Agent with the Agent Development Kit (ADK), introduced in spring 2025, and Gemini 3.1 Flash-Lite, a low-cost model Google launched on March 3, 2026. The system runs continuously, ingests files or API input, stores structured memories in SQLite, and consolidates those memories on a schedule, every 30 minutes by default.
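To make the ingest-store-consolidate loop concrete, here is a minimal sketch in Python. It is not the repository's code: the `memories` table, its columns, and the `ingest` and `consolidate` helpers are hypothetical names chosen for illustration, and the `summarize` callback stands in for whatever LLM call a real system would make.

```python
import sqlite3
import time
from typing import Callable, List, Optional

# The article's stated default: consolidate every 30 minutes.
CONSOLIDATE_EVERY_SECONDS = 30 * 60


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open SQLite and create a hypothetical memories table (not the repo's schema)."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS memories (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               created_at REAL NOT NULL,
               source TEXT NOT NULL,
               summary TEXT NOT NULL,
               consolidated INTEGER NOT NULL DEFAULT 0
           )"""
    )
    return conn


def ingest(conn: sqlite3.Connection, source: str, summary: str,
           now: Optional[float] = None) -> int:
    """Store one structured memory and return its row id."""
    cur = conn.execute(
        "INSERT INTO memories (created_at, source, summary) VALUES (?, ?, ?)",
        (time.time() if now is None else now, source, summary),
    )
    conn.commit()
    return cur.lastrowid


def consolidate(conn: sqlite3.Connection,
                summarize: Callable[[List[str]], str],
                now: Optional[float] = None) -> int:
    """Merge all unconsolidated memories into one summary row.

    Returns the number of memories merged (0 if fewer than two were pending).
    """
    rows = conn.execute(
        "SELECT id, summary FROM memories WHERE consolidated = 0 ORDER BY id"
    ).fetchall()
    if len(rows) < 2:
        return 0
    ids = [row[0] for row in rows]
    merged = summarize([row[1] for row in rows])  # an LLM call in a real system
    placeholders = ",".join("?" for _ in ids)
    conn.execute(
        f"UPDATE memories SET consolidated = 1 WHERE id IN ({placeholders})", ids
    )
    conn.execute(
        "INSERT INTO memories (created_at, source, summary, consolidated) "
        "VALUES (?, 'consolidation', ?, 1)",
        (time.time() if now is None else now, merged),
    )
    conn.commit()
    return len(rows)
```

In a long-running service, a scheduler would call `consolidate` once every `CONSOLIDATE_EVERY_SECONDS`; the sketch leaves that loop out so each step can be exercised in isolation.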

The repository frames its design with a deliberately provocative claim: "No vector database. No embeddings. Just an LLM that reads, thinks, and writes structured memory."

What Powers the Architecture?

The agent uses a multi-agent internal architecture with specialist components handling ingestion, consolidation, and querying. A local HTTP API and Streamlit dashboard come included. The system supports text, image, audio, video, and PDF ingestion.

This design choice simplifies prototypes and reduces infrastructure sprawl, especially for agents with small or medium-sized memory stores. Traditional retrieval stacks require separate embedding pipelines, vector storage infrastructure, indexing logic and maintenance, synchronization work across components, and dedicated monitoring and scaling systems.

Saboo's approach shifts the performance question from vector search overhead to model latency, memory compaction logic, and long-run behavioral stability. That tradeoff carries real economic implications.

Why Does Gemini Flash-Lite Make Always-On Memory Economically Viable?

A 24/7 service that periodically re-reads, consolidates, and serves memory needs predictable latency and low enough inference cost to avoid making "always on" prohibitively expensive. Gemini 3.1 Flash-Lite delivers exactly that profile.

Google built Flash-Lite for high-volume developer workloads and priced it at $0.25 per 1 million input tokens and $1.50 per 1 million output tokens. The company claims Flash-Lite is 2.5 times faster than Gemini 2.5 Flash in time to first token and delivers a 45% increase in output speed while maintaining similar or better quality.

What Performance Numbers Matter Most?

On Google's published benchmarks, Flash-Lite posts an Elo score of 1432 on Arena.ai, 86.9% on GPQA Diamond, and 76.8% on MMMU Pro. Google positions these characteristics as a fit for high-frequency tasks such as translation, moderation, UI generation, and simulation.

Those numbers help explain why Flash-Lite pairs well with a background-memory agent. The economics work when your agent consolidates memories every 30 minutes without burning through your infrastructure budget.
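A back-of-envelope calculation shows how that pricing translates into a daily bill. The prices below are the ones quoted in this article; the per-cycle token counts in the usage note are assumptions picked for illustration, not measurements from the repo.

```python
# USD per 1 million tokens, as quoted in the article for Gemini 3.1 Flash-Lite.
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 1.50


def cycles_per_day(interval_minutes: int = 30) -> int:
    """Number of consolidation cycles in 24 hours at the given interval."""
    return (24 * 60) // interval_minutes


def daily_cost(input_tokens_per_cycle: int,
               output_tokens_per_cycle: int,
               interval_minutes: int = 30) -> float:
    """Estimated daily consolidation cost in USD for assumed token volumes."""
    n = cycles_per_day(interval_minutes)
    input_cost = n * input_tokens_per_cycle / 1_000_000 * INPUT_PRICE_PER_M
    output_cost = n * output_tokens_per_cycle / 1_000_000 * OUTPUT_PRICE_PER_M
    return input_cost + output_cost
```

For example, assuming each cycle reads 20,000 tokens and writes 2,000, `daily_cost(20_000, 2_000)` covers 48 cycles and comes to about $0.38 per day, or roughly $11.52 over 30 days. Query traffic and ingestion would add to that figure, and actual token volumes depend on how large the memory store grows.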

What Should Enterprise Teams Know About Agent Development Kit?

Google's ADK documentation positions the framework as model-agnostic and deployment-agnostic, with support for workflow agents, multi-agent systems, tools, evaluation, and deployment targets including Cloud Run and Vertex AI Agent Engine. That combination makes the memory agent feel less like a one-off demo and more like a reference point for a broader agent runtime strategy.

The always-on memory agent is interesting on its own, but the larger message is that Saboo is trying to make agents feel like deployable software systems rather than isolated prompts. In that framing, memory becomes part of the runtime layer, not just an add-on feature.

Does This Work for Multi-Agent Systems?

The published materials do not clearly establish a broader claim that this is a shared memory framework for multiple independent agents. ADK as a framework supports multi-agent systems, but this specific repo is best described as an always-on memory agent built with specialist subagents and persistent storage.

That distinction matters for enterprise architects evaluating whether this approach fits their use case. Even at this narrower level, it addresses a core infrastructure problem many teams are actively working through.

What Governance Challenges Does Persistent Memory Create?

Public reaction to the release highlights why enterprise adoption of persistent memory will not hinge on speed or token pricing alone. Several responses on X raised exactly the concerns enterprise architects are likely to flag.

Franck Abe called Google ADK and 24/7 memory consolidation "brilliant leaps for continuous agent autonomy," but warned that an agent "dreaming" and cross-pollinating memories in the background without deterministic boundaries becomes "a compliance nightmare." ELED made a related point, arguing that the main cost of always-on agents is not tokens but "drift and loops."

What Governance Questions Must Teams Address?

Those critiques go directly to the operational burden of persistent systems. Who can write memory and under what conditions? What gets merged during consolidation cycles?

How does retention work and when are memories deleted? How do teams audit what the agent learned over time? What happens when memories conflict or become stale?

The published materials do not describe enterprise-grade compliance controls specific to this memory agent, such as deterministic policy boundaries, retention guarantees, segregation rules, or formal audit workflows. For now, the repo reads as a compelling engineering template rather than a complete enterprise memory platform.
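As a rough illustration of what answering those questions could involve, the sketch below adds three guardrails on top of a SQLite store: a writer allowlist, an append-only audit log, and a retention purge. Everything here is hypothetical, including the table names, the `ALLOWED_WRITERS` set, and the helper functions; none of it comes from the repo.

```python
import sqlite3

# Hypothetical allowlist: only these components may write memory.
ALLOWED_WRITERS = {"ingest_agent", "consolidate_agent"}


def open_governed_store() -> sqlite3.Connection:
    """Create an in-memory store with a memories table and an audit log."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE memories (
            id INTEGER PRIMARY KEY,
            created_at REAL NOT NULL,
            writer TEXT NOT NULL,
            summary TEXT NOT NULL
        );
        CREATE TABLE audit_log (
            id INTEGER PRIMARY KEY,
            at REAL NOT NULL,
            actor TEXT NOT NULL,
            action TEXT NOT NULL,
            memory_id INTEGER
        );
        """
    )
    return conn


def _audit(conn, at, actor, action, memory_id=None):
    """Record one audit event; rows are only ever inserted, never updated."""
    conn.execute(
        "INSERT INTO audit_log (at, actor, action, memory_id) VALUES (?, ?, ?, ?)",
        (at, actor, action, memory_id),
    )


def write_memory(conn, writer: str, summary: str, now: float) -> int:
    """Write a memory if the writer is allowed; log the attempt either way."""
    if writer not in ALLOWED_WRITERS:
        _audit(conn, now, writer, "write_denied")
        conn.commit()
        raise PermissionError(f"{writer} may not write memory")
    cur = conn.execute(
        "INSERT INTO memories (created_at, writer, summary) VALUES (?, ?, ?)",
        (now, writer, summary),
    )
    _audit(conn, now, writer, "write", cur.lastrowid)
    conn.commit()
    return cur.lastrowid


def purge_expired(conn, now: float, retention_seconds: float) -> int:
    """Delete memories older than the retention window and log each deletion."""
    cutoff = now - retention_seconds
    ids = [row[0] for row in conn.execute(
        "SELECT id FROM memories WHERE created_at < ?", (cutoff,)
    ).fetchall()]
    for memory_id in ids:
        conn.execute("DELETE FROM memories WHERE id = ?", (memory_id,))
        _audit(conn, now, "retention_job", "purge", memory_id)
    conn.commit()
    return len(ids)
```

A sketch like this only covers who wrote what and when it was deleted. It does not address what an LLM chooses to merge during consolidation, which is the harder part of the concerns raised above.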

Will LLM-Driven Memory Scale Beyond Small Contexts?

Another reaction, from Iffy, challenged the repo's "no embeddings" framing, arguing that the system still has to chunk, index, and retrieve structured memory. The criticism suggests the approach may work well for small-context agents but break down once memory stores become much larger.

That criticism is technically important. Removing a vector database does not remove retrieval design; it changes where the complexity lives.
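A small sketch makes the point tangible. Even with no embeddings, something has to decide which memories reach the model's context window. The example below uses plain keyword overlap in SQLite as that selection step; the schema and the `select_candidates` helper are hypothetical and are not taken from the repo, which may select memories quite differently.

```python
import sqlite3
from typing import Iterable, List, Tuple


def build_store(rows: Iterable[Tuple[str, str]]) -> sqlite3.Connection:
    """Create an in-memory table of (topic, summary) memories."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE memories (id INTEGER PRIMARY KEY, topic TEXT, summary TEXT)"
    )
    conn.executemany("INSERT INTO memories (topic, summary) VALUES (?, ?)", rows)
    conn.commit()
    return conn


def select_candidates(conn: sqlite3.Connection, query: str, limit: int = 5) -> List[str]:
    """Return up to `limit` summaries ranked by how many query terms they contain.

    This is still retrieval: it just swaps vector similarity for term matching.
    """
    terms = [t for t in query.lower().split() if len(t) > 2]
    if not terms:
        return []
    # Each matching term contributes 1 to the score (SQLite booleans are 0 or 1).
    score_expr = " + ".join(
        "(instr(lower(topic || ' ' || summary), ?) > 0)" for _ in terms
    )
    sql = (
        f"SELECT summary FROM (SELECT id, summary, {score_expr} AS score FROM memories) "
        "WHERE score > 0 ORDER BY score DESC, id DESC LIMIT ?"
    )
    return [row[0] for row in conn.execute(sql, terms + [limit]).fetchall()]
```

The query scans every row, which is fine for a few thousand memories and increasingly costly beyond that. That is the scaling concern in a nutshell: at some size, the store needs an index, whether full-text, structured, or vector-based.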

What Tradeoffs Should Enterprise Developers Consider?

For developers, the tradeoff is less about ideology than fit. A lighter stack may be attractive for low-cost, bounded-memory agents serving specific use cases.

Larger-scale deployments may still demand stricter retrieval controls, more explicit indexing strategies, and stronger lifecycle tooling. Saboo has also not yet published a direct benchmark of Flash-Lite against Anthropic's Claude Haiku for agent loops in production use. That comparison would help enterprise teams make informed build-versus-buy decisions.

What Does This Release Signal About Agent Infrastructure Trends?

The release lands at the right time. Enterprise AI teams are moving beyond single-turn assistants and into systems expected to remember preferences, preserve project context, and operate across longer horizons.

Saboo's open-source memory agent offers a concrete starting point for that next layer of infrastructure, and Flash-Lite gives the economics some credibility. The MIT License allows for commercial usage, which removes a common barrier to enterprise experimentation.

What Should Business Leaders Take Away?

First, simpler agent architectures may reduce operational complexity and infrastructure costs for bounded use cases. Second, the economics of always-on agents look workable at scale if you choose the right model and memory strategy. Third, governance and compliance controls will determine enterprise adoption more than raw capability.

The strongest takeaway from the reaction around the launch is that continuous memory will be judged on governance as much as capability. That is the real enterprise question behind Saboo's demo: not whether an agent can remember, but whether it can remember in ways that stay bounded, inspectable, and safe enough to trust in production.

Where Does Agent Memory Go From Here?

Saboo's Always On Memory Agent represents a practical engineering approach to persistent memory that challenges conventional wisdom about vector databases. The combination of ADK, Flash-Lite, and a simplified architecture makes continuous memory economically viable for more use cases.

But the path to enterprise adoption runs through governance, not just performance benchmarks. Teams evaluating this approach should focus on how memory consolidation, retention, and audit controls fit their compliance requirements. The repo provides a strong technical foundation, but production deployments will require additional guardrails.

For developers building support systems, research assistants, or workflow automation, this release offers a valuable reference implementation and a glimpse of where Google is taking agent infrastructure. Whether LLM-driven memory replaces vector databases broadly or serves as a complementary approach for specific use cases remains to be seen. What is clear is that persistent memory is moving from research challenge to deployable infrastructure, and the governance questions are just beginning.
