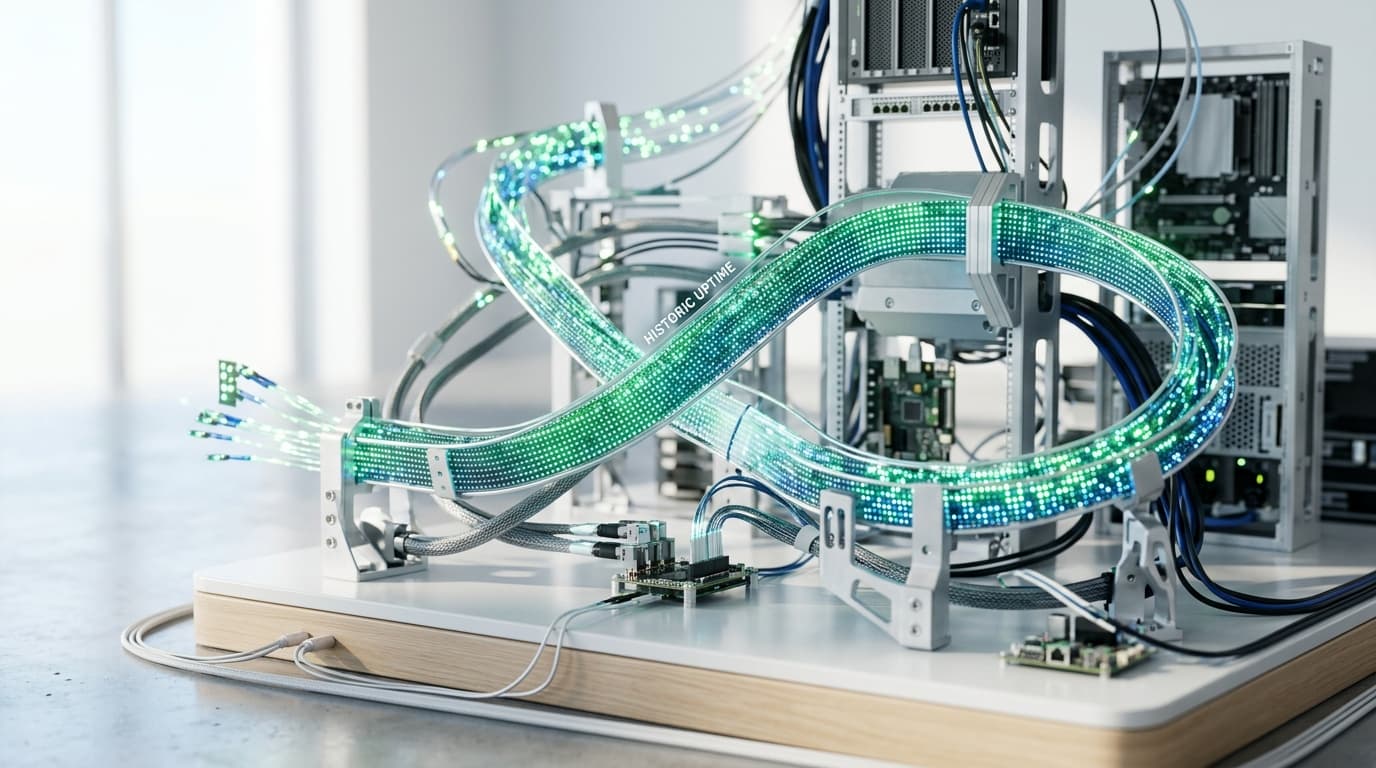

GitHub's Historic Uptime: How the Platform Stays Online

GitHub hosts over 100 million repositories and serves millions of developers daily. The platform's remarkable uptime record sets the standard for developer infrastructure reliability.

Understanding GitHub's Historic Uptime Performance

GitHub's historic uptime stands as a testament to modern infrastructure engineering at that scale.

When GitHub goes down, development workflows grind to a halt worldwide. This makes reliability not just important but absolutely critical for the platform's success.

The platform has maintained an average uptime of 99.95% or higher for most of its operational history. This translates to less than 4.5 hours of downtime per year, a remarkable achievement for a service handling billions of requests daily. GitHub's commitment to availability has shaped how developers think about infrastructure reliability and set industry benchmarks that competitors strive to match.
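The downtime figure follows directly from the availability percentage. As a quick check, assuming a 365-day year of 8,760 hours:

```latex
\text{downtime per year} = (1 - 0.9995) \times 8760\ \text{h} = 4.38\ \text{h}
```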

What Makes GitHub's Infrastructure So Reliable?

GitHub's reliability stems from a multi-layered approach to infrastructure design. The platform runs on a distributed architecture that spans multiple data centers and availability zones.

This geographic distribution means that if one location experiences issues, traffic can be routed automatically to healthy regions. When a failover works as designed, users see little or no interruption.
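The routing idea can be illustrated with a small sketch. This is not GitHub's implementation: the `Region` type, the region names, and the priority scheme are all hypothetical, chosen only to show how traffic might be steered to the most-preferred healthy location.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    healthy: bool
    priority: int  # lower number = preferred (assumed convention)


def pick_region(regions: list[Region]) -> Region:
    """Return the most-preferred healthy region, or raise if none is healthy."""
    candidates = [r for r in regions if r.healthy]
    if not candidates:
        raise RuntimeError("no healthy region available")
    return min(candidates, key=lambda r: r.priority)


# Hypothetical fleet: the preferred region is marked unhealthy to simulate an outage.
regions = [
    Region("us-east", healthy=False, priority=0),
    Region("us-west", healthy=True, priority=1),
    Region("eu-west", healthy=True, priority=2),
]
print(pick_region(regions).name)  # us-west
```

In a real deployment the `healthy` flag would come from continuous health checks rather than a hard-coded value.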

The company has invested heavily in redundancy at every level of the stack. Database replicas maintain synchronized copies of critical data across different physical locations. Load balancers distribute traffic so that no single server becomes overwhelmed.
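One common load-balancing strategy is "least connections", where each new request goes to the server currently handling the fewest. The sketch below is a minimal illustration of that strategy, not a description of GitHub's load balancers; the class name and server names are made up for the example.

```python
class LeastConnectionsBalancer:
    """Send each new request to the server with the fewest active connections."""

    def __init__(self, servers):
        # Maps server name to its number of in-flight requests.
        self.active = {server: 0 for server in servers}

    def acquire(self):
        """Pick a server for a new request and count the connection."""
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        """Mark one request on the given server as finished."""
        self.active[server] -= 1


lb = LeastConnectionsBalancer(["app-1", "app-2", "app-3"])
first_three = [lb.acquire() for _ in range(3)]
print(first_three)   # ['app-1', 'app-2', 'app-3']
lb.release("app-2")  # app-2 finishes its request first
print(lb.acquire())  # app-2
```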

Network connections utilize multiple internet service providers to eliminate single points of failure. This redundancy protects GitHub from provider-specific outages.

Microsoft's acquisition of GitHub in 2018 brought additional resources and Azure cloud infrastructure expertise. The acquisition gave GitHub access to enterprise-grade reliability features while it continued to operate independently. The integration enhanced GitHub's disaster recovery capabilities and expanded its global footprint.

How Does GitHub Monitor System Performance?

GitHub employs monitoring systems that track thousands of metrics in real time. These systems are designed to detect anomalies before they escalate into service disruptions.

Engineers receive automated alerts when performance metrics deviate from expected baselines. This enables rapid response to potential issues before users notice problems.
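A simple way to express "deviates from the expected baseline" is a z-score check: flag a value when it sits more than a few standard deviations from the recent mean. The sketch below assumes that approach; the function name, the threshold of 3, and the latency samples are illustrative and not taken from GitHub's tooling.

```python
from statistics import mean, stdev


def is_anomalous(baseline, value, threshold=3.0):
    """Return True if value is more than `threshold` standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value counts as a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical recent response times in milliseconds.
latencies_ms = [120, 118, 125, 122, 119, 121, 123, 120]

print(is_anomalous(latencies_ms, 124))  # False: within normal variation
print(is_anomalous(latencies_ms, 180))  # True: would trigger an alert
```

Production systems usually account for seasonality and use more robust statistics, but the principle of comparing against a learned baseline is the same.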

The platform's status page provides transparent communication during incidents. Users can view real-time service health across different GitHub features including Git operations, API requests, webhooks, and GitHub Actions.

This transparency builds trust and helps development teams plan around any temporary service degradations. Teams know exactly what's working and what's not.

Observability tools allow GitHub's engineering teams to trace requests through the entire system. When issues occur, engineers can quickly identify bottlenecks and root causes. This capability dramatically reduces mean time to resolution (MTTR) and prevents minor glitches from becoming major outages.
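MTTR is simply the average time between detecting an incident and resolving it. The following sketch shows the calculation; the incident timestamps are invented for illustration and do not correspond to real GitHub incidents.

```python
from datetime import datetime, timedelta


def mean_time_to_resolution(incidents):
    """Average (resolved - detected) over a list of (detected, resolved) pairs."""
    if not incidents:
        raise ValueError("need at least one incident")
    durations = [resolved - detected for detected, resolved in incidents]
    return sum(durations, timedelta()) / len(durations)


# Made-up incident log: (detected, resolved).
incidents = [
    (datetime(2024, 1, 5, 10, 0), datetime(2024, 1, 5, 10, 30)),    # 30 minutes
    (datetime(2024, 2, 12, 14, 0), datetime(2024, 2, 12, 15, 30)),  # 90 minutes
    (datetime(2024, 3, 20, 9, 0), datetime(2024, 3, 20, 10, 0)),    # 60 minutes
]
print(mean_time_to_resolution(incidents))  # 1:00:00
```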

How Does GitHub Handle Traffic Spikes?

GitHub experiences massive traffic variations throughout the day as developers in different time zones begin their workdays. The platform must scale seamlessly to handle these predictable patterns plus unexpected surges from viral repositories or major open-source releases.

Auto-scaling mechanisms automatically provision additional computing resources during high-demand periods. These systems monitor queue depths, response times, and resource utilization to make intelligent scaling decisions.

When traffic subsides, resources scale down to optimize costs without impacting performance. This dynamic approach maintains GitHub uptime while controlling infrastructure expenses.
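A scaling decision of this kind can be sketched with a proportional rule: grow or shrink the fleet by the ratio of observed to target utilization. This is the same shape as the formula documented for the Kubernetes Horizontal Pod Autoscaler, used here purely as an illustration; the source does not say which rule GitHub applies, and the function name, replica limits, and utilization figures below are assumptions.

```python
import math


def desired_replicas(current, observed_pct, target_pct, min_replicas=2, max_replicas=50):
    """Scale the replica count by observed/target utilization, clamped to the allowed range."""
    raw = math.ceil(current * observed_pct / target_pct)
    return max(min_replicas, min(max_replicas, raw))


print(desired_replicas(10, observed_pct=90, target_pct=60))  # 15: scale up under load
print(desired_replicas(10, observed_pct=30, target_pct=60))  # 5: scale down when quiet
print(desired_replicas(4, observed_pct=10, target_pct=60))   # 2: never below the floor
```

The floor keeps some redundancy in place even when traffic is low, which is the trade-off between cost and resilience that the paragraph above describes.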

Content delivery networks (CDNs) cache static assets and frequently accessed repositories closer to users. This distributed caching reduces load on origin servers and improves response times for users worldwide. CDN edge locations serve as a first line of defense against traffic spikes and distributed denial-of-service attacks.
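The way an edge cache shields origin servers can be shown with a tiny time-to-live (TTL) cache. This is a simplified sketch, not how any particular CDN is implemented; the class name, the 60-second TTL, and the asset path are hypothetical, and the clock is passed in explicitly to keep the example deterministic.

```python
class TTLCache:
    """Serve cached values until they expire, and go to the origin only on a miss."""

    def __init__(self, ttl_seconds, fetch_from_origin):
        self.ttl = ttl_seconds
        self.fetch = fetch_from_origin
        self.store = {}  # key -> (value, expires_at)
        self.origin_hits = 0

    def get(self, key, now):
        entry = self.store.get(key)
        if entry is not None and entry[1] > now:
            return entry[0]  # cache hit: origin is not contacted
        value = self.fetch(key)  # cache miss or expired entry
        self.origin_hits += 1
        self.store[key] = (value, now + self.ttl)
        return value


cache = TTLCache(ttl_seconds=60, fetch_from_origin=lambda key: f"contents of {key}")
cache.get("/assets/logo.png", now=0)   # miss: fetched from origin
cache.get("/assets/logo.png", now=30)  # hit: served from cache
cache.get("/assets/logo.png", now=61)  # expired: fetched again
print(cache.origin_hits)  # 2
```

Three requests resulted in only two origin fetches; at CDN scale, the same effect absorbs most of a traffic spike before it reaches the origin.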

What Can We Learn from GitHub's Notable Outages?

Even with exceptional uptime, GitHub has experienced several notable outages that provided valuable lessons. In October 2018, a brief loss of connectivity between GitHub's US East Coast network hub and its primary East Coast data center led to roughly 24 hours of degraded service.

This incident prompted significant improvements to GitHub's data consistency protocols and failover procedures. The company turned a negative event into an opportunity for growth.

The company publishes detailed post-mortems after major incidents. These reports explain what went wrong, why it happened, and what changes are intended to prevent recurrence.

The development community appreciates this openness. It demonstrates accountability and commitment to continuous improvement.

GitHub's incident response process follows established protocols:

- Immediate detection through automated monitoring

- Rapid escalation to on-call engineering teams

- Clear communication via status page updates

- Systematic troubleshooting using runbooks and playbooks

- Post-incident analysis and remediation planning

How Do Site Reliability Engineers Maintain GitHub Uptime?

Technology alone cannot guarantee uptime. GitHub employs dedicated site reliability engineers (SREs) who focus exclusively on system stability and performance.

These specialists bridge the gap between development and operations. They ensure that new features launch without compromising reliability.

On-call rotations ensure that experienced engineers are always available to respond to incidents. These rotations distribute the burden fairly while maintaining coverage across all time zones. Regular training and simulation exercises keep response skills sharp and procedures up to date.

Chaos engineering practices intentionally introduce failures into production systems under controlled conditions. These experiments validate that redundancy mechanisms work as designed and reveal unexpected failure modes. GitHub runs regular game days where teams practice responding to simulated disasters.
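The core idea of a chaos experiment can be shown in miniature: inject failures into one dependency and check that the fallback path still serves every request. The sketch below is a toy, in-process illustration, not a description of GitHub's tooling; every name in it (`with_fault_injection`, `read_primary`, `read_replica`, the 30% failure rate) is hypothetical.

```python
import random


class InjectedFailure(Exception):
    """Raised by the fault injector to simulate a failing dependency."""


def with_fault_injection(func, failure_rate, rng):
    """Wrap func so that roughly `failure_rate` of calls raise InjectedFailure."""
    def wrapper(*args, **kwargs):
        if rng.random() < failure_rate:
            raise InjectedFailure(f"injected failure in {func.__name__}")
        return func(*args, **kwargs)
    return wrapper


def read_primary(key):
    return f"primary:{key}"


def read_replica(key):
    return f"replica:{key}"


def resilient_read(key, primary=read_primary, replica=read_replica):
    """Read from the primary, falling back to the replica if the primary fails."""
    try:
        return primary(key)
    except InjectedFailure:
        return replica(key)


# Experiment: fail about 30% of primary reads and confirm every request is still served.
rng = random.Random(42)  # fixed seed so the drill is repeatable
flaky_primary = with_fault_injection(read_primary, failure_rate=0.3, rng=rng)
results = [resilient_read(f"repo-{i}", primary=flaky_primary) for i in range(100)]
served_by_replica = sum(r.startswith("replica:") for r in results)
print(f"served {len(results)} requests, {served_by_replica} via the replica")
```

If the fallback were missing or broken, the experiment would surface that as an unhandled exception during the drill rather than during a real outage.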

How Does GitHub's Uptime Compare to Industry Standards?

The software industry typically measures availability using "nines" of uptime. Three nines (99.9%) allows for roughly 8.76 hours of downtime annually.

Four nines (99.99%) permits only about 52.6 minutes. Five nines (99.999%) restricts downtime to just 5.26 minutes per year.
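These downtime budgets can be reproduced with a few lines of arithmetic. The sketch below assumes a 365-day year; the function name is chosen for this example.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, assuming a 365-day year


def downtime_minutes_per_year(availability_percent):
    """Return the yearly downtime budget, in minutes, for a given availability percentage."""
    return (100 - availability_percent) / 100 * MINUTES_PER_YEAR


for label, pct in [("three nines", 99.9), ("four nines", 99.99), ("five nines", 99.999)]:
    minutes = downtime_minutes_per_year(pct)
    print(f"{label} ({pct}%): {minutes:.2f} minutes, or {minutes / 60:.2f} hours")
```

Each additional nine cuts the allowed downtime by a factor of ten, which is why moving from three to four nines is a much larger engineering effort than the small change in percentage suggests.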

At the 99.95% average cited earlier, GitHub sits between three and four nines, which places it among the more reliable developer platforms. That is in the same range as the availability targets commonly published by major cloud providers such as AWS, Google Cloud, and Azure.

For a platform serving such a critical role in software development, this reliability level is essential. Developers need confidence that their code repositories remain accessible.

Competitors like GitLab and Bitbucket face similar reliability challenges. The entire industry has elevated its standards as developers increasingly depend on cloud-based tools. Any platform hoping to compete for enterprise customers must demonstrate comparable uptime metrics and incident response capabilities.

What Can Developers Learn from GitHub's Reliability Approach?

GitHub's reliability engineering practices offer valuable lessons for development teams building their own applications. Start by implementing comprehensive monitoring before problems occur.

You cannot fix what you cannot measure. Early detection prevents minor issues from becoming catastrophic failures.

Design for failure from the beginning. Assume that components will fail and build redundancy into your architecture.

Use load balancers, database replicas, and multi-region deployments to eliminate single points of failure. Test your failover mechanisms regularly to ensure they work when needed.
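The payoff from redundancy can be estimated with basic probability. Components that all must work (in series) multiply their availabilities, while redundant copies (in parallel) only fail when every copy fails. The sketch below assumes failures are independent, which real systems only approximate, and the availability figures are illustrative.

```python
import math


def series_availability(components):
    """Availability of a chain where every component must be up."""
    return math.prod(components)


def parallel_availability(availability, replicas):
    """Availability of N redundant replicas where at least one must be up."""
    return 1 - (1 - availability) ** replicas


single_db = 0.99
replicated_db = parallel_availability(single_db, replicas=2)
print(f"one database:  {single_db:.4%}")
print(f"two replicas:  {replicated_db:.4%}")

# Load balancer -> application tier -> replicated database, all required.
stack = series_availability([0.9999, 0.9999, replicated_db])
print(f"whole stack:   {stack:.4%}")
```

Two 99% replicas behave like a single 99.99% component, but chaining three such tiers brings the total back down to roughly 99.97%, so every layer in the request path needs its own redundancy.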

Document your incident response procedures before emergencies happen. Clear runbooks help teams respond quickly and consistently during high-stress situations. Practice your response through regular drills and post-mortems that focus on learning rather than blame.

What Does the Future Hold for GitHub's Infrastructure?

GitHub continues investing in infrastructure improvements to maintain its reliability leadership. The platform is expanding its use of edge computing to bring services closer to users.

This geographic distribution reduces latency and improves resilience against regional outages. Users benefit from faster response times regardless of location.

Artificial intelligence and machine learning increasingly play roles in reliability engineering. Predictive analytics can identify potential failures before they occur by recognizing patterns in system metrics.
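A very simple form of predictive analytics is trend extrapolation: fit a straight line to recent samples of a metric and estimate when it will cross a critical threshold. The sketch below uses ordinary least squares for that; it is a deliberately basic stand-in for the machine learning models the paragraph alludes to, and the function name and disk-usage figures are hypothetical.

```python
def hours_until_threshold(samples, threshold):
    """Fit a least-squares line to (hour, value) samples and estimate the hours
    from the last sample until the threshold is reached.
    Returns None if the trend is flat or decreasing."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in samples)
    if sxx == 0:
        raise ValueError("samples must span more than one point in time")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing_hour = (threshold - intercept) / slope
    return max(0.0, crossing_hour - samples[-1][0])


# Hypothetical disk usage (percent) sampled once per hour.
disk_usage = [(0, 70), (1, 72), (2, 74), (3, 76)]
print(hours_until_threshold(disk_usage, threshold=90))  # 7.0
```

An estimate like this gives on-call engineers, or an automated remediation job, several hours of warning before the disk actually fills.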

Automated remediation can resolve common issues without human intervention. This reduces MTTR significantly and improves overall GitHub uptime.

The rise of GitHub Codespaces and other cloud-based development environments creates new reliability challenges. These services require even higher availability standards since they directly impact developer productivity in real time. GitHub's infrastructure must evolve to support these demanding workloads while maintaining its historic uptime standards.

Why Does GitHub's Uptime Matter to Developers?

GitHub's historic uptime represents more than impressive statistics. It reflects a fundamental commitment to the millions of developers who depend on the platform daily.

The company's transparent communication, robust infrastructure, and continuous improvement mindset set industry standards. These practices benefit the entire software development ecosystem.

Developers and organizations can trust GitHub to be available when needed. This reliability enables teams to focus on building great software rather than worrying about infrastructure stability.

As software development becomes increasingly collaborative and cloud-based, platforms like GitHub must maintain exceptional uptime. The global developer community depends on this reliability to work effectively across time zones and geographies.
