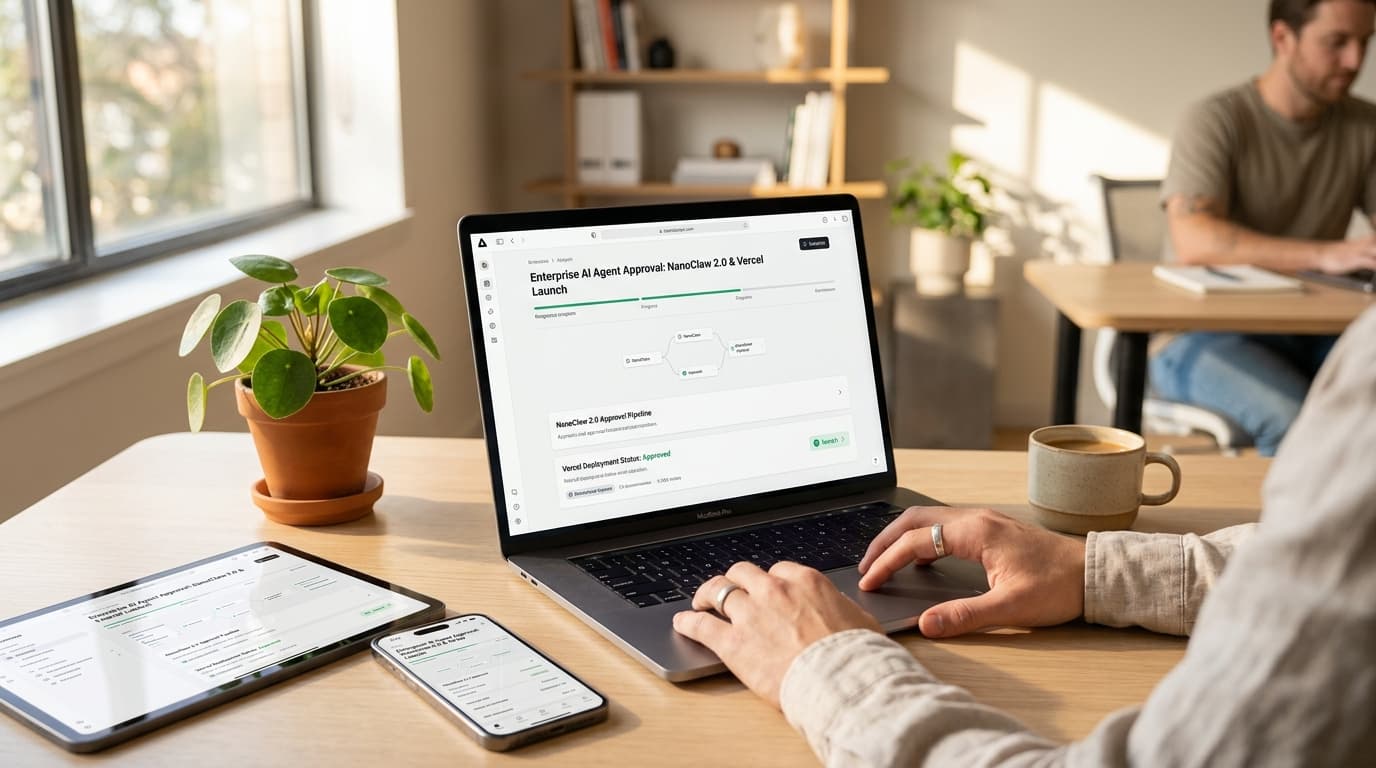

Enterprise AI Agent Approval: NanoClaw 2.0 & Vercel Launch

NanoClaw 2.0 eliminates the risky tradeoff between useful AI agents and security. The new partnership with Vercel brings human approval workflows to 15 messaging platforms.

The Enterprise AI Agent Security Dilemma Ends Today

For the past year, businesses adopting autonomous AI agents faced an impossible choice. Keep the agent locked in a useless sandbox, or hand it full API access and pray it does not hallucinate a catastrophic command. Neither option worked for enterprises needing real productivity gains without existential risk.

That calculation changed when NanoClaw 2.0 launched its partnership with Vercel and OneCLI. The collaboration introduces infrastructure-level approval systems that let enterprise AI agents propose actions while requiring explicit human consent before execution. The approval requests arrive as native cards in the 15 messaging apps where knowledge workers already spend their days.

AI agents only become valuable when they can take meaningful actions. Scheduling meetings, triaging emails, or managing cloud infrastructure requires write access to sensitive systems. Until now, granting that access meant accepting unacceptable risk.

Why Do Traditional AI Agent Security Models Fail Enterprises?

Most agent frameworks rely on application-level security, where the AI model itself asks for permission before acting. Gavriel Cohen, co-founder of NanoCo (the startup behind NanoClaw), calls this approach fundamentally flawed.

"The agent could potentially be malicious or compromised," Cohen explained. "If the agent is generating the UI for the approval request, it could trick you by swapping the 'Accept' and 'Reject' buttons."

This vulnerability stems from treating the AI as both the actor and the security gatekeeper. Complex frameworks like OpenClaw grew to nearly 400,000 lines of code trying to solve this problem, creating what VentureBeat called a "security nightmare" that no human could fully audit.

The credential access problem proved equally intractable. To function effectively, agents needed raw API keys with broad permissions. Once granted, nothing prevented an errant prompt injection from triggering destructive commands. IT departments blocked agent adoption entirely rather than accept this exposure.

How Does NanoClaw 2.0 Enforce Infrastructure-Level Security?

NanoClaw's solution moves security enforcement completely outside the agent's control. Every agent runs inside strictly isolated Docker or Apple Containers, with zero access to real credentials. The agent operates using placeholder keys that trigger security checks when used.

When an agent attempts any outbound request, the OneCLI Rust Gateway intercepts it before it reaches the internet. The gateway evaluates the action against user-defined policies. Read-only requests might pass automatically, while sensitive operations like sending emails or modifying infrastructure trigger approval workflows.
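The policy check described above can be pictured with a minimal sketch. The real gateway is written in Rust and its policy format is not shown in this article, so every name here (`OutboundRequest`, `PolicyRule`, `evaluate`, the `mail.example.com` host) is a hypothetical stand-in, written in TypeScript only to match the rest of the NanoClaw stack.

```typescript
// Hypothetical types for illustration; not the OneCLI policy schema.
type Decision = "allow" | "require_approval" | "deny";
type Method = "GET" | "HEAD" | "POST" | "PUT" | "PATCH" | "DELETE";

interface OutboundRequest {
  method: Method;
  host: string;
  path: string;
}

interface PolicyRule {
  hostSuffix: string; // matches the host itself or any subdomain of it
  methods: Method[];
  decision: Decision;
}

// First matching rule wins; anything unmatched is denied (default-deny).
function evaluate(req: OutboundRequest, rules: PolicyRule[]): Decision {
  for (const rule of rules) {
    const hostMatches =
      req.host === rule.hostSuffix || req.host.endsWith("." + rule.hostSuffix);
    const methodMatches = rule.methods.some((m) => m === req.method);
    if (hostMatches && methodMatches) {
      return rule.decision;
    }
  }
  return "deny";
}

// Example policy: reads pass automatically, writes need a human.
const rules: PolicyRule[] = [
  { hostSuffix: "mail.example.com", methods: ["GET", "HEAD"], decision: "allow" },
  {
    hostSuffix: "mail.example.com",
    methods: ["POST", "PUT", "PATCH", "DELETE"],
    decision: "require_approval",
  },
];
```

The default-deny fallback is a design choice of this sketch: a request to a host with no rule at all is refused rather than silently allowed.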

The technical architecture ensures the agent structurally cannot bypass oversight:

- Agents run in OS-level isolated containers with limited filesystem access

- Real API keys never enter the container environment

- The Rust Gateway sits between the agent and all external services

- Approval requests are generated outside the agent's control

- Only after human approval does the gateway inject real credentials

This approach confines the "blast radius" of potential prompt injections strictly to the container. Even a completely compromised agent cannot access systems without triggering the approval workflow.
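The placeholder-key idea from the list above might look roughly like the following sketch. It assumes the agent sends its placeholder as a bearer token and that the gateway holds a lookup table of real secrets; the names `secretStore`, `injectCredentials`, and `PLACEHOLDER_MAIL_KEY` are invented for illustration and do not come from NanoClaw or OneCLI.

```typescript
interface AgentRequest {
  url: string;
  headers: Record<string, string>;
}

// Hypothetical secret store that lives on the gateway side, outside the container.
const secretStore = new Map<string, string>([
  ["PLACEHOLDER_MAIL_KEY", "real-mail-api-key"],
]);

const BEARER = "Bearer ";

// Returns a copy of the request with the real credential swapped in.
// Throws if the request was not approved or the placeholder is unknown.
function injectCredentials(req: AgentRequest, approved: boolean): AgentRequest {
  if (!approved) {
    throw new Error("refusing to inject credentials: request not approved");
  }
  const auth = req.headers["authorization"] ?? "";
  const placeholder = auth.startsWith(BEARER) ? auth.slice(BEARER.length) : "";
  const secret = secretStore.get(placeholder);
  if (secret === undefined) {
    throw new Error("unknown placeholder credential");
  }
  // The original request object is left untouched, so the agent never sees the secret.
  return {
    url: req.url,
    headers: { ...req.headers, authorization: BEARER + secret },
  };
}
```

Because the swap happens on a copy held by the gateway, a compromised agent that inspects its own request still sees only the placeholder.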

What Makes NanoClaw's Codebase Auditable?

NanoClaw condensed its core logic into roughly 500 lines of TypeScript across just 15 source files. The entire codebase totals approximately 3,900 lines, compared to hundreds of thousands in competing frameworks. This minimalism serves security directly.

According to NanoClaw's documentation, the entire system can be audited by a human or secondary AI in approximately eight minutes. For security-conscious enterprises requiring code review before deployment, this represents a practical path to verification.

The framework rejects feature bloat in favor of a "Skills over Features" philosophy. Instead of maintaining unused modules, users contribute modular instructions that teach local AI assistants how to customize the codebase for specific needs. Users only maintain the exact code their implementation requires.

How Does Vercel Chat SDK Bring Human-in-the-Loop to 15 Messaging Platforms?

Security infrastructure means nothing without usable interfaces. Integrating with messaging platforms has historically required custom code for each app's unique API. Slack uses different interactive elements than Teams, which differs from WhatsApp.

Vercel's Chat SDK solves this through a unified TypeScript interface. NanoClaw 2.0 can now deploy approval workflows to 15 different channels from a single codebase. When an agent needs permission for a protected action, the user receives a rich interactive card on their preferred platform.

"The approval shows up as a rich, native card right inside Slack or WhatsApp or Teams, and the user taps once to approve or deny," Cohen said. This seamless UX transforms human oversight from a productivity bottleneck into a one-tap decision.
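One way to picture a single approval definition rendered to several channels is the sketch below. It is not the Vercel Chat SDK API, which this article does not show; `ApprovalCard`, `renderers`, and `render` are hypothetical names, and the two channels are arbitrary examples. The point it illustrates is that the buttons are fixed by trusted code, so agent-supplied text can fill the title and details but cannot relabel or reorder "Approve" and "Deny".

```typescript
type Verdict = "approve" | "deny";

interface ApprovalCard {
  requestId: string;
  title: string;   // may contain agent-supplied text
  details: string; // may contain agent-supplied text
}

interface Button {
  label: string;
  verdict: Verdict;
}

interface RenderedCard {
  channel: string;
  body: string;
  buttons: Button[];
}

// Defined once by trusted code; the agent has no way to alter these.
const BUTTONS: readonly Button[] = [
  { label: "Approve", verdict: "approve" },
  { label: "Deny", verdict: "deny" },
];

type Renderer = (card: ApprovalCard) => RenderedCard;

// Hypothetical per-channel renderers; only the body formatting differs.
const renderers: Record<string, Renderer> = {
  slack: (card) => ({
    channel: "slack",
    body: `*${card.title}*\n${card.details}`,
    buttons: [...BUTTONS],
  }),
  email: (card) => ({
    channel: "email",
    body: `${card.title}\n\n${card.details}`,
    buttons: [...BUTTONS],
  }),
};

function render(channel: string, card: ApprovalCard): RenderedCard {
  const renderer = renderers[channel];
  if (renderer === undefined) {
    throw new Error(`no renderer for channel: ${channel}`);
  }
  return renderer(card);
}
```

Adding a channel in this sketch means adding one renderer entry, while the approval logic and button set stay shared.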

The 15 supported messaging platforms include:

- Slack, Microsoft Teams, and Webex for enterprise collaboration

- WhatsApp, Telegram, and iMessage for mobile communication

- Discord and Matrix for developer communities

- Google Chat, Facebook Messenger, and Instagram for consumer platforms

- X (Twitter), GitHub, and Linear for workflow integration

- Email for universal compatibility

The iMessage integration, built on Vercel's Photon project, addresses a specific enterprise pain point. Previously, connecting agents to iMessage required maintaining a separate Mac Mini server. The new integration eliminates this infrastructure requirement.

Where Do Approval Workflows Matter Most?

The approval system targets scenarios involving high-consequence "write" actions where automation provides value but errors carry significant cost.

DevOps and Cloud Infrastructure

An AI agent monitoring cloud infrastructure can detect scaling opportunities or security vulnerabilities. Under NanoClaw 2.0, the agent proposes the infrastructure change with full context. A senior engineer receives the proposal in Slack, reviews the specifics, and approves or rejects with one tap.

This workflow preserves the speed advantage of AI-driven monitoring while ensuring humans make final decisions on production changes. The agent becomes a tireless analyst who never implements changes without oversight.

Finance and Payment Processing

Finance teams handle high-volume, repetitive tasks where errors have immediate monetary consequences. An agent can analyze invoices, flag discrepancies, and prepare batch payments. The final disbursement requires human signature via a WhatsApp card showing transaction details.

The OneCLI dashboard lets finance teams set granular policies. An agent might freely read financial data and generate reports, but any action that moves money triggers mandatory approval. This mirrors existing corporate security protocols around dual authorization.
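The "read freely, approve before money moves" rule can be sketched as a small state check. This is an illustrative model only, not the OneCLI dashboard's policy format: `PaymentBatch`, `prepareBatch`, `approve`, and `disburse` are hypothetical names, and amounts are kept in integer cents to avoid floating-point rounding.

```typescript
interface Payment {
  invoiceId: string;
  amountCents: number;
}

interface PaymentBatch {
  payments: Payment[];
  approvedBy: string | null; // null until a human signs off
}

// The agent may prepare and total a batch without any approval.
function prepareBatch(payments: Payment[]): PaymentBatch {
  return { payments: payments.slice(), approvedBy: null };
}

function totalCents(batch: PaymentBatch): number {
  return batch.payments.reduce((sum, p) => sum + p.amountCents, 0);
}

// Recording approval returns a new batch; in a real system this step
// would be driven by the human's tap on the approval card.
function approve(batch: PaymentBatch, approver: string): PaymentBatch {
  return { payments: batch.payments, approvedBy: approver };
}

// The only function that "moves money" refuses to run without approval.
function disburse(batch: PaymentBatch): string[] {
  if (batch.approvedBy === null) {
    throw new Error("disbursement blocked: no human approval recorded");
  }
  return batch.payments.map((p) => p.invoiceId);
}
```

Keeping the approval check inside `disburse` itself, rather than in the caller, mirrors the article's point that enforcement should not depend on the agent behaving well.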

Email and Calendar Management

Executive assistants spend significant time on email triage and calendar coordination. An AI agent can draft responses, categorize messages, and propose meeting times. Under approval workflows, the agent can read freely but must request permission before sending emails or accepting calendar invites.

This transforms the AI into a highly capable junior assistant who always confirms before taking action on your behalf. Productivity increases without the risk of embarrassing or damaging communications sent without review.

Should Your Enterprise Adopt NanoClaw 2.0?

NanoClaw 2.0 represents a shift from speculative AI experimentation to safe operational deployment. The framework suits enterprises with specific characteristics and requirements.

When Does NanoClaw Make Strategic Sense?

Organizations should consider this approach if they require high auditability and face strict compliance requirements around data access. The small, reviewable codebase and infrastructure-level security align with enterprise security practices. Companies blocked from agent adoption due to credential concerns now have a middle ground.

The principle of least privilege, already standard in IT security, extends naturally to AI agents through policy-based approval workflows. Businesses handling sensitive operations, financial transactions, or regulated data gain the productivity of automation without forgoing executive control. The agent structurally cannot act without permission, regardless of prompt engineering or model behavior.

What Are the Implementation Considerations?

The single-process Node.js architecture with isolated containers allows security teams to verify that the gateway controls all outbound traffic. This provides the audit trail and oversight that compliance frameworks require. The open-source MIT license lets enterprises fork and customize the codebase for specific needs.

This contrasts with proprietary agent platforms where internal security review proves impossible. The "Open Source Avengers" coalition behind the project demonstrates that modular, open-source tools can outpace proprietary labs in building practical AI application layers. NanoClaw handles orchestration, Vercel provides UI/UX, and OneCLI manages security and secrets.

What Does This Mean for AI-Native Operations?

NanoClaw launched on January 31, 2026, as a minimalist response to the security crisis in complex agent frameworks. Created by Cohen, a former Wix.com engineer, and marketed by his brother Lazer, the project addressed the auditability problems inherent in bloated codebases.

The March 2026 partnership with Docker further matured the security posture through MicroVM-based isolation. This provides enterprise-ready environments for agents that must install packages, modify files, and launch processes while maintaining container isolation. The framework natively supports "Agent Swarms" via the Anthropic Agent SDK, allowing specialized agents to collaborate in parallel while maintaining isolated memory contexts for different business functions.

This enables sophisticated multi-agent workflows without compromising security boundaries.

What Does the Future Hold for Supervised Autonomous AI?

NanoClaw 2.0 establishes the blueprint for trust management in the age of autonomous AI. The partnership proves that agents can deliver productivity without requiring blind faith in model behavior. The project has amassed more than 27,400 stars on GitHub and maintains an active Discord community.

This signals strong developer interest in security-first agent frameworks that enterprises can actually deploy. As AI-native operations become standard, the supervised autonomous model will likely dominate. Agents provide the analytical power and tireless attention of AI, while humans retain final authority over consequential decisions.

This division of labor maximizes the strengths of both. For enterprises evaluating AI agent adoption, NanoClaw 2.0 offers a practical path forward. The framework transforms AI from a potentially rogue operator into a highly capable junior staffer who always asks permission before hitting the "send" or "buy" button.

In an era where AI capabilities advance faster than trust frameworks, this approach balances innovation with institutional risk management.
