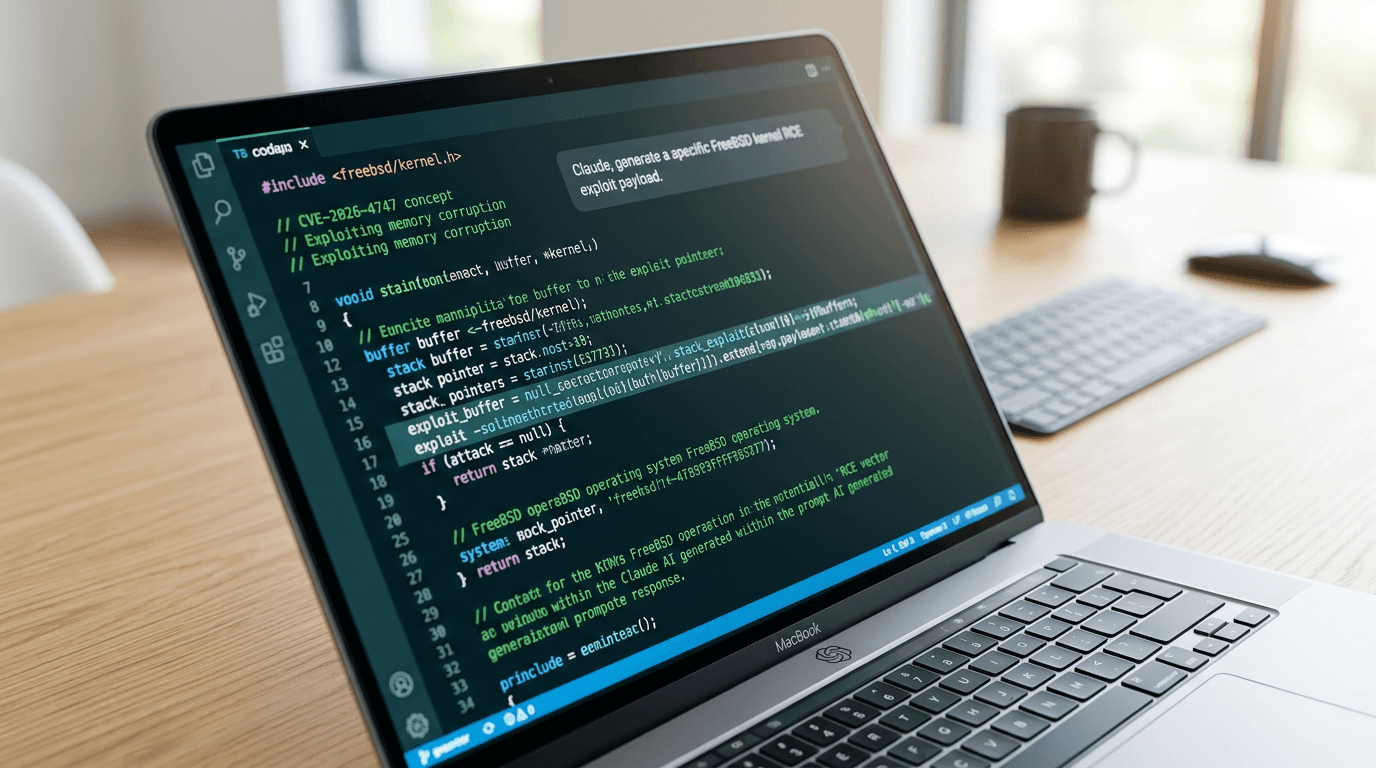


Claude AI Wrote FreeBSD Kernel RCE Exploit (CVE-2026-4747)

An AI assistant reportedly wrote a full remote kernel exploit for FreeBSD that achieves a root shell. The event raises pressing questions about AI capabilities.

Can AI Create Kernel Exploits? Claude's FreeBSD Breakthrough Explained


An artificial intelligence assistant appears to have crossed a significant threshold in cybersecurity history. Claude, an AI developed by Anthropic, reportedly wrote a complete remote kernel exploit for FreeBSD that achieves root shell access; the underlying vulnerability is tracked as CVE-2026-4747. This development marks an unprecedented moment where AI capabilities intersect with offensive security research.

The implications extend far beyond a single vulnerability. This event forces the tech community to confront questions about AI-assisted exploit development, responsible disclosure, and the future landscape of both offensive and defensive security operations.

What Is the FreeBSD Kernel Vulnerability?

FreeBSD stands as one of the most respected Unix-like operating systems, powering critical infrastructure worldwide. The kernel forms the core of this system, managing hardware resources and providing essential services to applications.

CVE-2026-4747 is a remote code execution vulnerability that allows attackers to gain root-level access without physical or local access to the target. Root privileges grant complete control over a system, enabling attackers to read sensitive data, install malware, or pivot into connected networks. The remote nature of this exploit makes it particularly dangerous: any vulnerable system reachable over the network, including from the internet, is a potential target.

The technical sophistication required to identify and exploit kernel vulnerabilities typically demands years of specialized knowledge. Kernel exploits require understanding memory management, system call interfaces, and privilege escalation techniques.

How Do Remote Kernel Exploits Work?

Remote kernel exploits target vulnerabilities in how the operating system kernel processes network requests or handles system calls. Attackers craft malicious input that triggers unexpected behavior in kernel code, often leveraging buffer overflows, use-after-free conditions, or race conditions.
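To make the most common bug class concrete, the C sketch below shows the defensive side of it: a parser that receives a length-prefixed record from the network and must not trust the sender's length field. The wire format, the `parse_record` function, and the 64-byte `DEST_CAP` buffer are hypothetical and chosen only for illustration; this is not FreeBSD code and says nothing about how CVE-2026-4747 itself works.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical wire format, for illustration only:
 *   bytes 0..1 : payload length (big-endian uint16, sender-controlled)
 *   bytes 2..  : payload bytes
 * The fixed-size destination models a kernel stack or heap object. */
#define DEST_CAP 64

/* Copies the payload into dest and returns its length, or returns -1 if
 * the record is malformed. The unsafe pattern this guards against is
 * memcpy(dest, pkt + 2, claimed) with `claimed` taken on trust. */
int parse_record(const uint8_t *pkt, size_t pkt_len, uint8_t dest[DEST_CAP])
{
    if (pkt == NULL || dest == NULL || pkt_len < 2)
        return -1;                      /* too short to hold the header */

    size_t claimed = ((size_t)pkt[0] << 8) | pkt[1];

    if (claimed > pkt_len - 2)          /* length field exceeds bytes received */
        return -1;
    if (claimed > DEST_CAP)             /* copy would overflow the destination */
        return -1;

    memcpy(dest, pkt + 2, claimed);
    return (int)claimed;
}
```

Without the first length check, `memcpy` reads past the end of the received data; without the second, it writes past the end of `dest`, which is exactly the memory corruption stage of a typical exploit chain.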

The exploit chain typically involves several stages:


- Initial vulnerability trigger: Attackers send crafted network packets or system calls that expose the kernel flaw

- Memory corruption: The exploit manipulates kernel memory to gain code execution within kernel space

- Privilege escalation: The attack elevates permissions from user-level to root access

- Payload delivery: Attackers establish a persistent shell or backdoor with full system control

Because a successful kernel exploit runs with the highest privileges on the system, it can bypass or disable user-space security measures, including traditional antivirus and endpoint security software. This explains why kernel vulnerabilities command premium prices in exploit markets and receive critical severity ratings.


Why Are AI-Generated Exploits Significant?

Claude AI's creation of a functional kernel exploit represents a paradigm shift in cybersecurity capabilities. Previously, developing such exploits required human expertise accumulated over years of study and practice.

AI systems can analyze large volumes of security research, vulnerability databases, and code repositories to identify potential weaknesses. They can generate exploit code that follows established patterns while adapting it to specific target configurations. This could shorten exploit development from weeks or months to potentially hours.

The democratization of exploit development raises serious concerns. If AI assistants can generate sophisticated exploits, the barrier to entry for malicious actors drops significantly. Individuals without deep technical knowledge could potentially leverage AI to create dangerous tools.

What Technical Capabilities Did Claude Demonstrate?

Claude's reported achievement suggests several advanced capabilities working in concert. According to the reports, the AI identified exploitable code paths in the FreeBSD kernel and generated working shellcode for the target platform.

This requires knowledge spanning multiple domains:

- Kernel internals: Understanding memory management, process scheduling, and system call handling

- Assembly language: Writing low-level code that executes directly on the processor

- Exploit techniques: Applying methods like return-oriented programming or heap spraying

- Network protocols: Crafting packets that trigger vulnerabilities remotely

The synthesis of these skills into a working exploit suggests reasoning capabilities that extend beyond pattern matching or code completion: multi-step problem-solving directed at a specific security objective.

How Should We Handle AI-Generated Vulnerabilities?

The handling of AI-generated vulnerabilities introduces new ethical considerations. Traditional responsible disclosure involves security researchers privately notifying vendors before public release, allowing time for patches.

When AI systems generate exploits, questions arise about disclosure protocols. Should AI companies implement safeguards preventing exploit generation? How do we balance security research benefits against potential misuse?

The FreeBSD community has presumably received notification about CVE-2026-4747, allowing them to develop and distribute patches. Users should prioritize updating their systems immediately to protect against potential exploitation.

What Impact Will This Have on the Security Industry?

This development will likely accelerate changes already underway in cybersecurity. Defensive teams must now consider that attackers can leverage AI to discover and exploit vulnerabilities faster than ever.

Security vendors already integrate AI into defensive tools. Automated vulnerability scanning, anomaly detection, and incident response systems increasingly rely on machine learning. The arms race between offensive and defensive AI capabilities has effectively begun.

Penetration testing and red team operations may increasingly incorporate AI assistants. This could improve security assessments by identifying vulnerabilities human testers might miss, but it also raises questions about how to validate findings and assign accountability when AI performs significant portions of the work.

What Should Organizations Do Now?

The emergence of AI-generated exploits demands proactive responses from organizations running potentially vulnerable systems. FreeBSD users should immediately check for available patches addressing CVE-2026-4747 and apply them across all affected systems.
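Checking patch levels across many hosts is easier to automate than to do by hand. Below is a minimal C sketch, assuming the version string format printed by `freebsd-version -k` (for example `14.2-RELEASE-p3`). The function names and the fixed patch level used in the example are hypothetical; the real fixed versions for CVE-2026-4747 must come from the FreeBSD security advisory for each supported release branch.

```c
#include <stdio.h>

/* Parses "14.2-RELEASE-p3" or "14.2-RELEASE" (optionally newline-terminated)
 * into major, minor, and patch level; a missing -pN suffix means patch 0.
 * Returns 0 on success, -1 for anything else (e.g. -STABLE, -CURRENT). */
int parse_release(const char *s, int *major, int *minor, int *patch)
{
    int n = 0;
    if (sscanf(s, "%d.%d-RELEASE%n", major, minor, &n) != 2 || n == 0)
        return -1;                      /* not a -RELEASE version string */

    const char *rest = s + n;
    if (*rest == '\0' || *rest == '\n') {
        *patch = 0;                     /* unpatched base release */
        return 0;
    }

    int m = 0;
    if (sscanf(rest, "-p%d%n", patch, &m) != 1 || m == 0)
        return -1;
    rest += m;
    return (*rest == '\0' || *rest == '\n') ? 0 : -1;
}

/* Returns 1 if `installed` is at or above the fixed patch level on the
 * same release branch, 0 if it is older, -1 if it cannot be compared. */
int at_or_above_fix(const char *installed, int fix_major, int fix_minor, int fix_patch)
{
    int maj, min, p;
    if (parse_release(installed, &maj, &min, &p) != 0)
        return -1;
    if (maj != fix_major || min != fix_minor)
        return -1;                      /* other branch: check its own advisory entry */
    return p >= fix_patch ? 1 : 0;
}
```

For instance, with a hypothetical fix in `14.2-RELEASE-p5`, `at_or_above_fix("14.2-RELEASE-p4", 14, 2, 5)` returns 0, so that host still needs updating. The function returns -1 for other branches and for -STABLE or -CURRENT builds rather than guessing, because patch numbers are not comparable across branches.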

Broader security strategies must evolve to address AI-enhanced threats. Organizations should implement defense-in-depth approaches that don't rely solely on preventing exploitation. Assume-breach planning becomes more relevant as exploit development accelerates.

Key actions include:

- Patch management: Establish rapid deployment processes for critical security updates

- Network segmentation: Limit the blast radius of potential compromises

- Monitoring and detection: Implement robust logging and anomaly detection systems

- Incident response planning: Prepare procedures for handling sophisticated breaches

- Security awareness: Train teams on evolving threat landscapes
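As one illustration of the monitoring item, here is a deliberately simple statistical baseline in C. It flags a new per-interval event count (for example, inbound connection attempts per minute, a hypothetical metric here) when the count exceeds the mean of a recent window by more than a chosen number of standard deviations. The function name, the threshold, and the choice of a z-score test are assumptions for this sketch; treat it as a starting point, not a production detector.

```c
#include <stddef.h>

/* Returns 1 if `latest` lies more than `threshold` (assumed >= 0) sample
 * standard deviations above the mean of baseline[0..n-1], else 0.
 * Compares squared values so no sqrt() (and no libm) is needed. */
int is_anomalous(const double *baseline, size_t n, double latest, double threshold)
{
    if (baseline == NULL || n < 2)
        return 0;                       /* not enough history to judge */

    double mean = 0.0;
    for (size_t i = 0; i < n; i++)
        mean += baseline[i];
    mean /= (double)n;

    double var = 0.0;
    for (size_t i = 0; i < n; i++) {
        double d = baseline[i] - mean;
        var += d * d;
    }
    var /= (double)(n - 1);             /* sample variance */

    double excess = latest - mean;
    if (excess <= 0.0)
        return 0;                       /* only upward spikes are flagged */
    if (var == 0.0)
        return 1;                       /* flat baseline: any rise stands out */
    return excess * excess > threshold * threshold * var;
}
```

With the baseline `{10, 12, 11, 9, 10, 11, 12, 10}` and a threshold of 3, a count of 40 is flagged and a count of 12 is not.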

What Does the Future Hold for AI in Cybersecurity?

This incident provides a glimpse into the future relationship between artificial intelligence and information security. AI will likely become an indispensable tool for both attackers and defenders.

The technology could revolutionize vulnerability research, helping identify flaws before malicious actors exploit them. Automated code analysis powered by AI might catch bugs during development, preventing vulnerabilities from reaching production systems.

However, the same capabilities enable adversaries to scale their operations. Nation-state actors and cybercriminal organizations are likely to explore AI-assisted exploit development. The cybersecurity community must develop countermeasures and ethical frameworks to address these challenges.

Regulatory considerations will likely emerge as governments grapple with AI security implications. Questions about liability, disclosure requirements, and acceptable use policies for AI in security contexts remain largely unresolved.

Conclusion: Navigating the AI Security Landscape

Claude's reported creation of a complete FreeBSD kernel exploit represents a watershed moment in cybersecurity history. If confirmed, it shows that AI systems now possess capabilities previously reserved for elite security researchers.

The cybersecurity community must respond thoughtfully to this new reality. Organizations need updated security strategies that account for AI-enhanced threats. Researchers must explore both offensive and defensive applications of AI while establishing ethical guidelines.


The FreeBSD vulnerability itself demands immediate attention from system administrators. Apply available patches promptly and review security configurations to minimize exposure. This incident signals broader changes requiring sustained attention and adaptation from everyone involved in information security.
